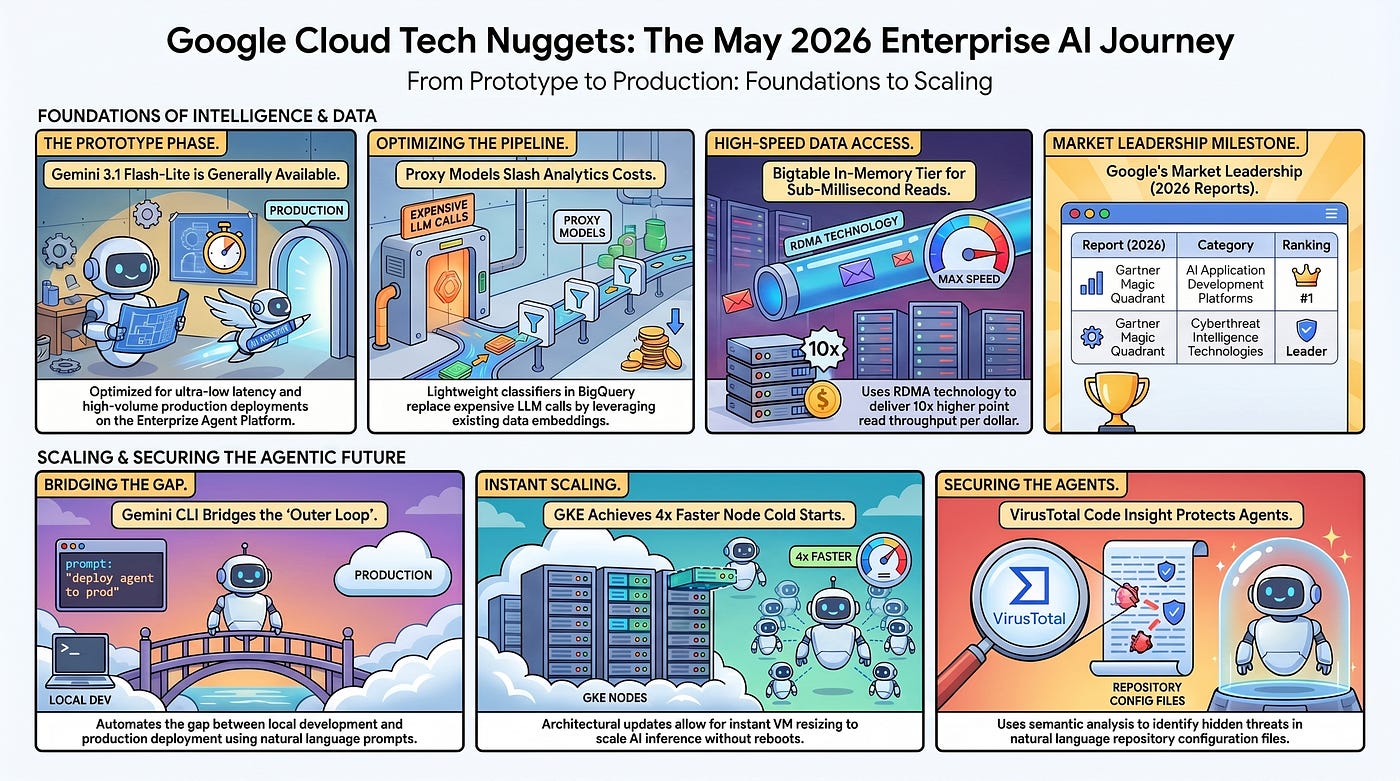

Google Cloud Platform Technology Nuggets — May 1–15, 2026

Welcome to the May 1–15, 2026 edition of Google Cloud Platform Technology Nuggets. The nuggets are also available on YouTube.

AI and Machine Learning

Gemini 3.1 Flash-Lite is now generally available on the Gemini Enterprise Agent Platform. It is a model specifically optimized for ultra-low latency and high-volume production deployments. This addition to the Gemini 3 series focuses on providing precision for agentic tasks such as tool calling, orchestration, and automated pipeline execution while minimizing operational costs. Check out the blog post.

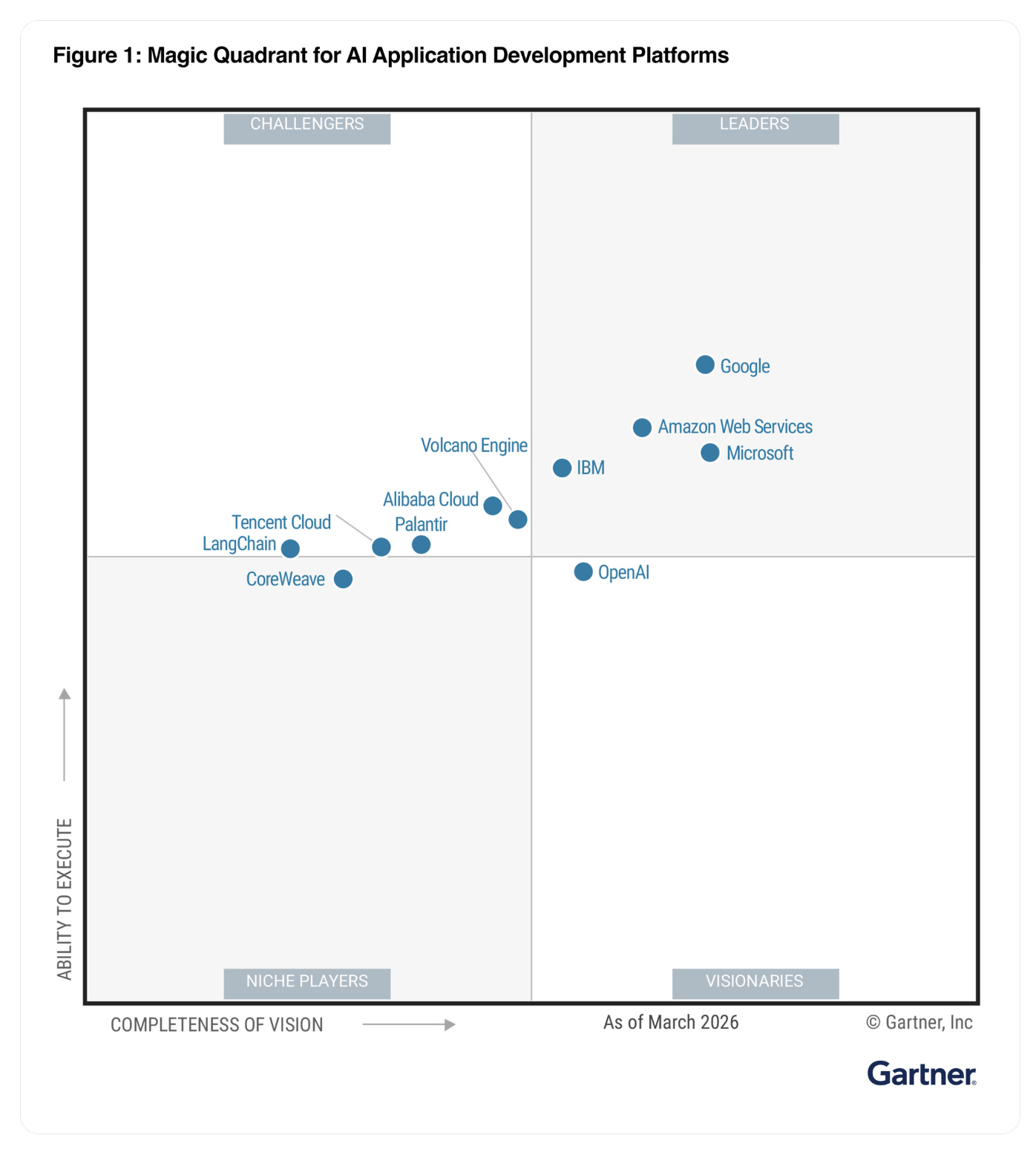

In a mid-cycle update of the Gartner® Magic Quadrant™ for AI Application Development Platforms, Google has been given the #1 ranking across the three use cases assessed in the associated Critical Capabilities report. Download a complimentary copy of the 2026 Gartner Magic Quadrant update here.

Data Analytics

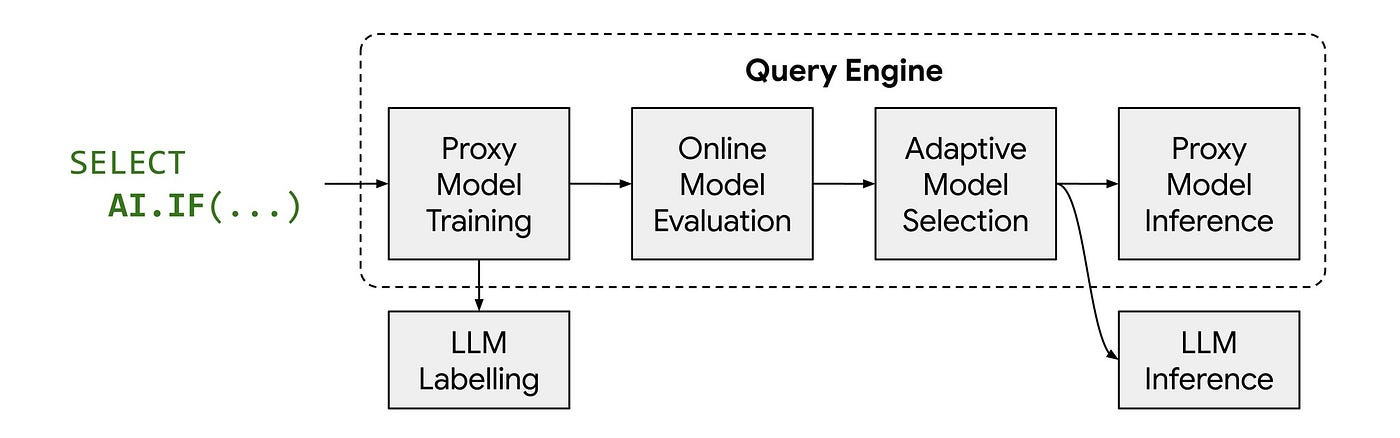

AI-powered SQL functions are a solid addition to databases, but their cost and latency are critical factors. An interesting approach called proxy models is now available: lightweight classifiers, such as logistic regression, that replace the majority of expensive LLM calls during query execution. These models leverage existing Gemini embeddings of the data to identify semantic patterns, allowing the system to perform tasks like binary classification (AI.IF) or multiclass classification (AI.CLASSIFY) on the CPU rather than on specialized hardware. The process is an automated workflow in which the engine samples a small portion of the data, labels it using an LLM, trains a specialized proxy model on the fly, and evaluates its accuracy before deciding whether to use the proxy or the full LLM. This optimization is currently available in BigQuery and AlloyDB. Check out the blog post.
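To make the sample, label, train, evaluate, decide workflow concrete, here is a minimal sketch in plain Python. It is not how BigQuery or AlloyDB implement the feature. The function names, the 0.95 accuracy threshold, the sample size, and the `expensive_llm_label` stub (which stands in for a real LLM call) are all assumptions made for illustration.

```python
import math
import random


def expensive_llm_label(embedding):
    """Hypothetical stand-in for an LLM call: labels by the sign of dimension 0."""
    return 1 if embedding[0] > 0 else 0


def sigmoid(z):
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def train_logistic_regression(xs, ys, epochs=200, lr=0.5):
    """Batch gradient descent on the logistic loss; returns (weights, bias)."""
    dim, n = len(xs[0]), len(xs)
    w, b = [0.0] * dim, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * dim, 0.0
        for x, y in zip(xs, ys):
            err = sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b) - y
            for i in range(dim):
                grad_w[i] += err * x[i]
            grad_b += err
        w = [wi - lr * g / n for wi, g in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


def predict(model, x):
    w, b = model
    return 1 if sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b) >= 0.5 else 0


def classify_rows(embeddings, sample_size=200, min_accuracy=0.95, seed=0):
    """Return (labels, path, holdout_accuracy), where path is 'proxy' or 'llm'."""
    rng = random.Random(seed)
    # 1. Sample a small portion of the rows and label them with the LLM.
    sample_idx = rng.sample(range(len(embeddings)), sample_size)
    labeled = [(embeddings[i], expensive_llm_label(embeddings[i])) for i in sample_idx]
    # 2. Train the proxy on 80% of the sample, hold out 20% for evaluation.
    split = int(0.8 * sample_size)
    train, holdout = labeled[:split], labeled[split:]
    model = train_logistic_regression([x for x, _ in train], [y for _, y in train])
    # 3. Evaluate the proxy against the LLM labels on the holdout set.
    accuracy = sum(predict(model, x) == y for x, y in holdout) / len(holdout)
    # 4. Use the cheap proxy only if it is accurate enough; otherwise fall back.
    if accuracy >= min_accuracy:
        return [predict(model, x) for x in embeddings], "proxy", accuracy
    return [expensive_llm_label(x) for x in embeddings], "llm", accuracy
```

In this sketch the LLM is called only `sample_size` times when the proxy passes the accuracy check, and once per row otherwise, which is where the cost and latency savings described above would come from.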

Databases

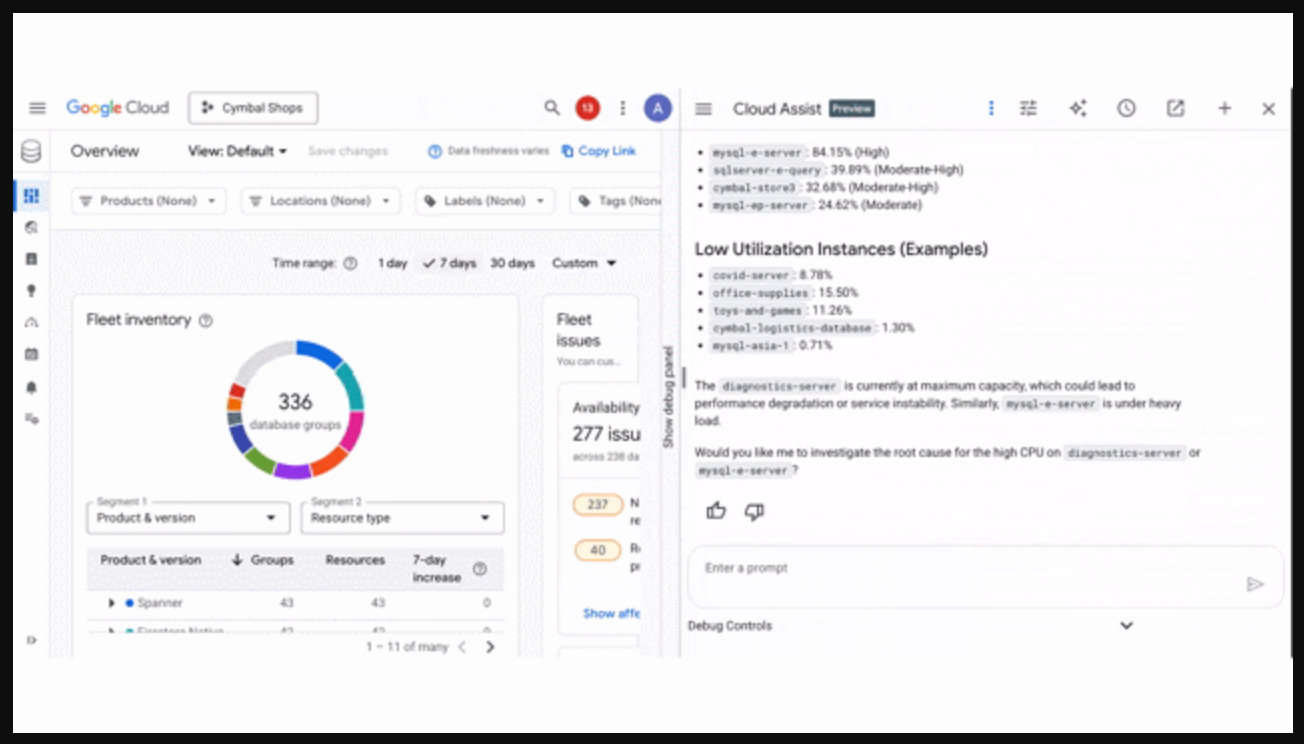

The Database Center has been infused with several AI-driven updates to assist with the management of large-scale database fleets. The platform now features Gemini-powered intelligence for fleet-wide performance analysis, a natural language chat interface for troubleshooting across services like Cloud SQL and Spanner, and a testing agent to validate the impact of recommendations on latency and throughput before they are applied. Technical enhancements include public APIs integrated with the Model Context Protocol (MCP) for developer tool integration, centralized slow query analysis, and the inclusion of BigQuery inventory and data affiliation to map dependencies between transactional and analytical systems. Check out the blog post.

Bigtable has introduced the Bigtable in-memory tier, a new feature within the Enterprise Plus edition designed to provide sub-millisecond read latency by using a hybrid storage architecture. This tier uses Remote Direct Memory Access (RDMA) to transfer data directly from memory without involving the system’s CPU or operating system, which helps avoid performance bottlenecks during traffic spikes. It offers approximately 10x higher point read throughput per dollar compared to standard configurations and provides hotspot resistance by supporting up to 120,000 queries per second on a single row. Check out the blog post.

PostgreSQL 18 is now available in AlloyDB alongside a new Extended Support offering for older versions. This update includes B-tree skip scans for faster data retrieval, parallel GIN index usage for JSON and full-text searches, and native UUIDv7 support for better indexing efficiency in distributed applications. The platform now also allows virtual generated columns, which compute data on the fly without using additional disk space. For older versions ranging from PostgreSQL 14 to 17, Extended Support provides three additional years of critical security patches and bug fixes beyond the community end-of-life dates. Users can perform in-place major version upgrades to transition to these newer releases. Check out the blog post.
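To see why UUIDv7 tends to index better than random UUIDs, it helps to look at its layout: the first 48 bits are a Unix timestamp in milliseconds, so newly generated values sort close together in a B-tree. PostgreSQL 18 provides this natively through its `uuidv7()` function. The Python sketch below only illustrates the bit layout from RFC 9562, and the helper name `uuid7` is my own choice for the example.

```python
import os
import time
import uuid


def uuid7(unix_ms=None):
    """Build a UUIDv7: 48-bit ms timestamp, version, 12 random bits, variant, 62 random bits."""
    if unix_ms is None:
        unix_ms = time.time_ns() // 1_000_000
    rand = int.from_bytes(os.urandom(10), "big")   # 80 random bits
    rand_a = rand >> 68                            # top 12 random bits
    rand_b = rand & ((1 << 62) - 1)                # low 62 random bits

    value = (unix_ms & ((1 << 48) - 1)) << 80      # bits 127..80: timestamp
    value |= 0x7 << 76                             # bits 79..76: version 7
    value |= rand_a << 64                          # bits 75..64: rand_a
    value |= 0b10 << 62                            # bits 63..62: RFC variant
    value |= rand_b                                # bits 61..0: rand_b
    return uuid.UUID(int=value)


if __name__ == "__main__":
    first = uuid7()
    time.sleep(0.002)
    second = uuid7()
    # Later timestamps sort after earlier ones, keeping index inserts localized.
    print(first, second, first < second)
```

Because the timestamp occupies the most significant bits, values generated in different milliseconds compare in creation order, while values within the same millisecond are ordered only by their random bits in this sketch.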

As always, if you are looking to bookmark a single link that covers what's new in Google Data Cloud, check this out.

Developers & Practitioners

The Gemini Enterprise Agent Platform is squarely focused on running, scaling, governing, and optimizing agents in production. Doing that requires guidance from Google Cloud, which is now available in the form of five guides that help transition AI agents from prototypes to production:

Long-Running Agents: Utilize Agent Runtime for maintaining state up to seven days, enabling checkpoint-and-resume and human-in-the-loop approval workflows.

Agent Governance Stack: Implement a five-layer security framework using Agent Identity for isolation, Agent Registry for tool control, and Agent Gateway for policy enforcement.

Multi-Agent Orchestration: Use the Agent Development Kit (ADK) to build graph-based workflows and coordinator-specialist patterns for complex task management.

A2A and MCP Interoperability: Connect agents across organizations and data systems using Agent-to-Agent (A2A) and Model Context Protocol (MCP) standards.

Atomic Agent Blueprints: Access pre-configured architectures in the Agent Garden to accelerate development and avoid manual design errors.

Check out the blog post for more details.
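The checkpoint-and-resume idea with a human-in-the-loop approval step, mentioned in the Long-Running Agents guide, can be sketched generically as follows. This does not use the Agent Runtime or ADK APIs. The step names, the `run_agent` function, and the JSON checkpoint format are hypothetical and exist only to show the pattern.

```python
import json

# Hypothetical workflow: the agent must pause before executing until a human approves.
STEPS = ["gather_data", "draft_plan", "await_approval", "execute_plan"]


def run_agent(checkpoint_json=None, approved=False):
    """Run steps until finished or until approval is needed.

    Returns (status, checkpoint_json), where status is 'paused' or 'done'.
    The checkpoint is a JSON string so it could be persisted anywhere.
    """
    if checkpoint_json:
        state = json.loads(checkpoint_json)       # resume from saved state
    else:
        state = {"next_step": 0, "log": []}       # fresh run

    while state["next_step"] < len(STEPS):
        step = STEPS[state["next_step"]]
        if step == "await_approval" and not approved:
            # Persist progress and hand control back to a human.
            return "paused", json.dumps(state)
        state["log"].append(step)                 # stand-in for doing real work
        state["next_step"] += 1
    return "done", json.dumps(state)


if __name__ == "__main__":
    status, checkpoint = run_agent()
    print(status, checkpoint)                     # paused before approval
    status, checkpoint = run_agent(checkpoint, approved=True)
    print(status, checkpoint)                     # resumes and completes
```

The key design point is that all progress lives in the serialized state rather than in process memory, so the run can be resumed hours or days later by a different worker.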

While most AI developer tools focus on coding, one challenge that remains is the outer loop: bridging the gap between local development and production deployment. The Gemini CLI Extension for CI/CD is designed to do exactly that. Using the Model Context Protocol (MCP), the extension integrates with Gemini CLI, Claude Code, and Antigravity to automate both “inner loop” tasks, like rapid deployment to Cloud Run via buildpacks, and “outer loop” requirements, such as generating cloudbuild.yaml files and provisioning infrastructure. Key technical features include a pre-deployment security scan to prevent secret leakage, an automated application analysis for containerization, and the ability to provision Artifact Registry repositories and Cloud Build triggers through natural language prompts. For more details, check out the blog post.
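As a rough illustration of what a pre-deployment secret scan does, the sketch below checks file contents against a few regular expressions and blocks the deploy if anything matches. It is not the extension's actual scanner. The patterns, the function names, and the `files` mapping are assumptions chosen for the example, and a real scanner would cover far more cases.

```python
import re

# Illustrative patterns only; a production scanner would use a much larger rule set.
SECRET_PATTERNS = {
    "google_api_key": re.compile(r"AIza[0-9A-Za-z_\-]{35}"),
    "private_key_block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    "hardcoded_password": re.compile(
        r"(?i)\b(password|passwd|secret)\s*[:=]\s*['\"][^'\"]{4,}['\"]"
    ),
}


def scan_text(text):
    """Return a list of (line_number, pattern_name) for every suspicious line."""
    findings = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((lineno, name))
    return findings


def safe_to_deploy(files):
    """files maps path -> content. Returns (ok, report of files with findings)."""
    report = {}
    for path, content in files.items():
        findings = scan_text(content)
        if findings:
            report[path] = findings
    return (not report), report


if __name__ == "__main__":
    example = {
        "app.py": 'API_KEY = "AIza' + "A" * 35 + '"\n',
        "main.py": "print('hello')\n",
    }
    ok, report = safe_to_deploy(example)
    print("deploy allowed:", ok)
    print(report)
```

Running the check before the build step, rather than after, means a leaked key never reaches the container image or the registry.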

Containers and Kubernetes

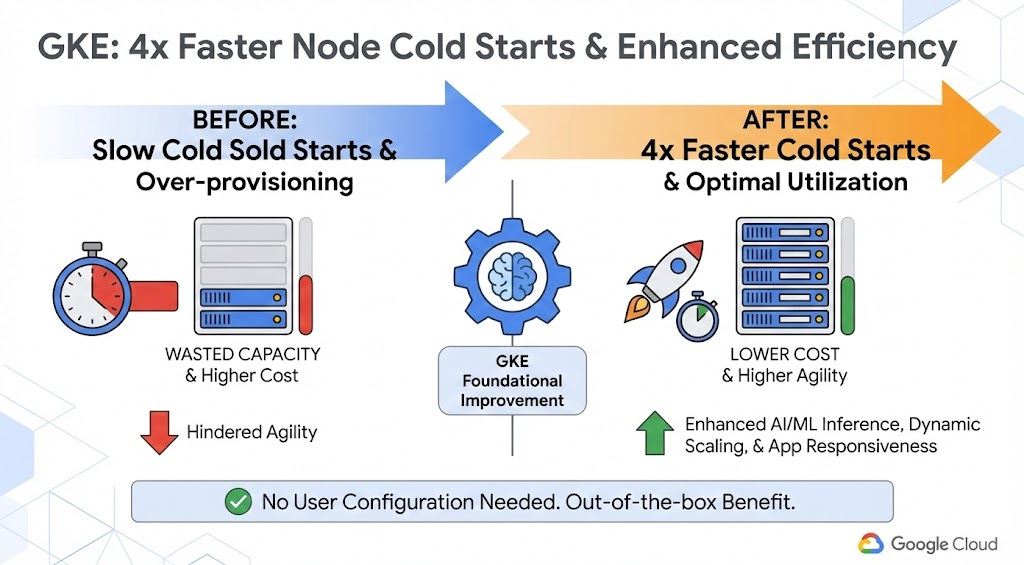

Google Cloud has introduced an architectural update to Google Kubernetes Engine (GKE) that reduces node startup times by up to 4x to address cold-start latency. This improvement is achieved by reworking node provisioning logic through a combination of intelligent compute buffers, fast-starting virtual machines, and a control plane that allows for instant VM resizing without reboots. These technical changes aim to reduce the need for over-provisioning and improve the scaling of AI inference and batch processing workloads. The feature is currently available for GKE Autopilot and specific NVIDIA GPU hardware, including L4, A100, RTXPRO6000, and H100 nodes, as well as general-purpose compute. For more details, check out the blog post.

Security and Identity

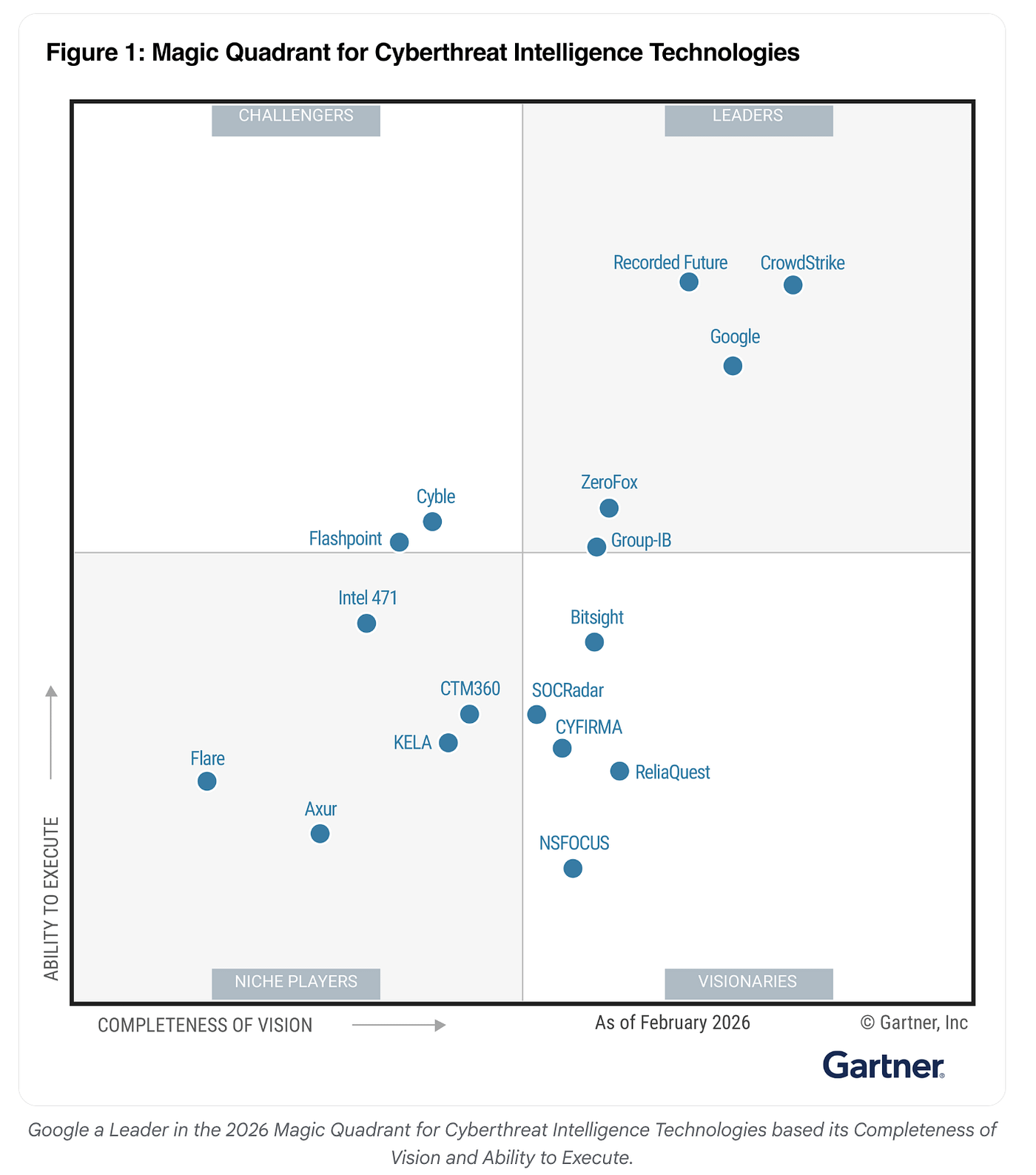

Gartner has named Google a Leader in the 2026 Magic Quadrant for Cyberthreat Intelligence Technologies. You can download the full 2026 Gartner® Magic Quadrant™ for Cyberthreat Intelligence Technologies here.

The second Cloud CISO Perspectives for April 2026 is out. This issue discusses a strategy centered on multicloud and multi-AI environments to address modern security needs. Key technical highlights include the use of Gemini-powered security agents that have reduced alert triage times from 30 minutes to 60 seconds and a new dark web intelligence capability with 98% accuracy. The issue also discusses features in the new Gemini Enterprise Agent Platform, which include an Agent Gateway for identity management, Model Armor for prompt sanitization, and automated Agent Identity verification.

With the Wiz acquisition now complete and highlighted at Google Cloud Next 2026, most practitioners are looking forward to understanding how it all comes together under a common platform. The first Cloud CISO Perspectives for May 2026 highlights how the collaboration affects multicloud security and discusses a strategy centered on developer enablement and automated defense. The collaboration combines Wiz’s cloud telemetry with Google Cloud AI logic to move toward near real-time vulnerability resolution and self-healing infrastructure.

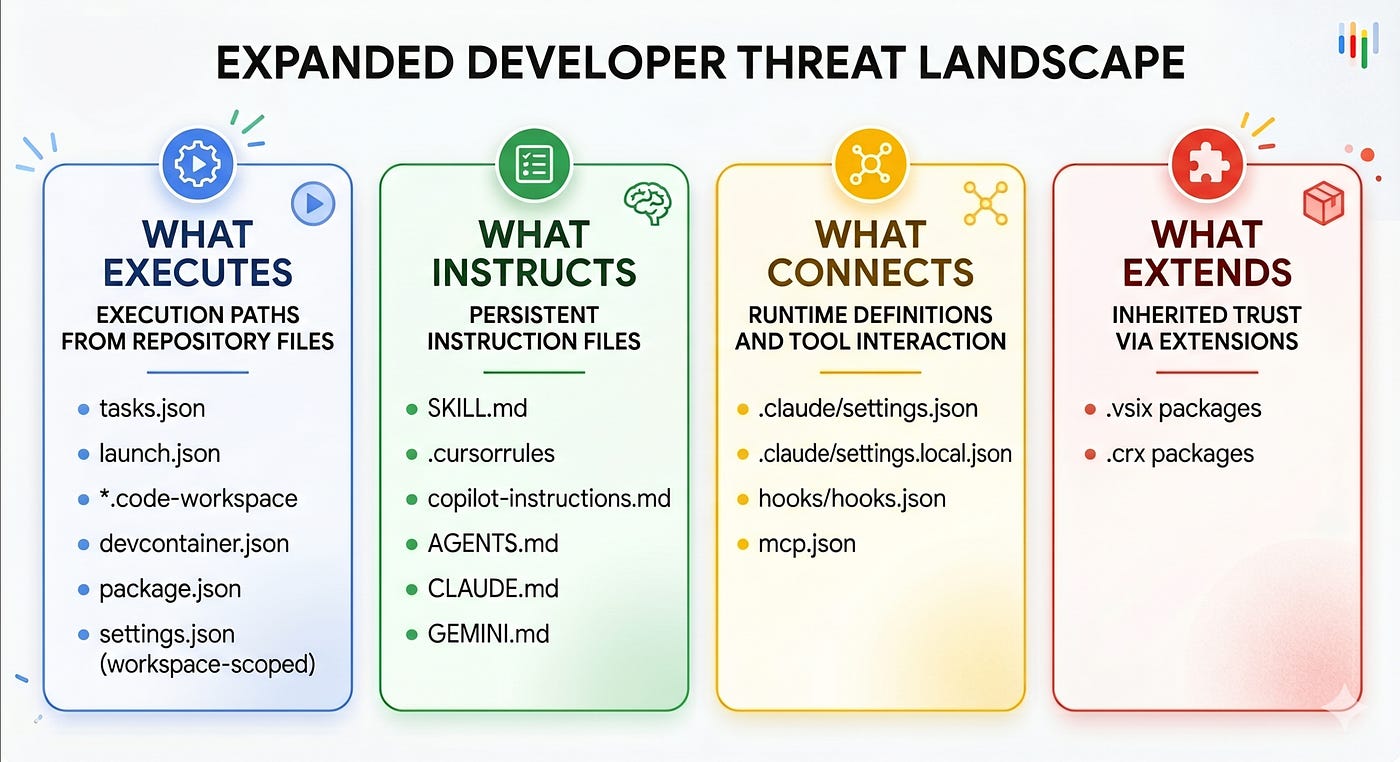

If you are looking to understand the evolving security risks for AI coding agents, this article explains how the attack surface has expanded beyond source code to include the files these agents trust. Threat actors are now targeting repository-level artifacts like tasks.json, settings.json, and Skill.md files to execute code, exfiltrate API keys, or redirect traffic to malicious endpoints by manipulating the agent’s semantic intent. Because these files often use natural language or valid configurations, they bypass traditional signature-based scanners. To counter this, Google Cloud highlights the use of VirusTotal Code Insight to perform semantic analysis, allowing defenders to identify high-risk instructions and hidden supply-chain threats within developer environments.
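A practical first step before any semantic analysis is simply knowing which of these agent-trusted files exist in a repository. The sketch below builds that inventory. It does not detect malicious content and is unrelated to how VirusTotal Code Insight works. The file name list comes from the examples above, and the function name is hypothetical.

```python
import os

# File names taken from the examples in the article, compared case-insensitively.
AGENT_TRUSTED_NAMES = {"tasks.json", "settings.json", "skill.md"}


def find_agent_trusted_files(repo_root):
    """Return sorted relative paths of files that coding agents may implicitly trust."""
    hits = []
    for dirpath, dirnames, filenames in os.walk(repo_root):
        # Skip Git internals; everything else in the working tree is in scope.
        dirnames[:] = [d for d in dirnames if d != ".git"]
        for name in filenames:
            if name.lower() in AGENT_TRUSTED_NAMES:
                full_path = os.path.join(dirpath, name)
                hits.append(os.path.relpath(full_path, repo_root))
    return sorted(hits)


if __name__ == "__main__":
    for path in find_agent_trusted_files("."):
        print("review before trusting:", path)
```

The resulting list can then be routed to human review or a semantic analysis tool, since pattern matching alone is unlikely to catch instructions written in natural language.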

Infrastructure

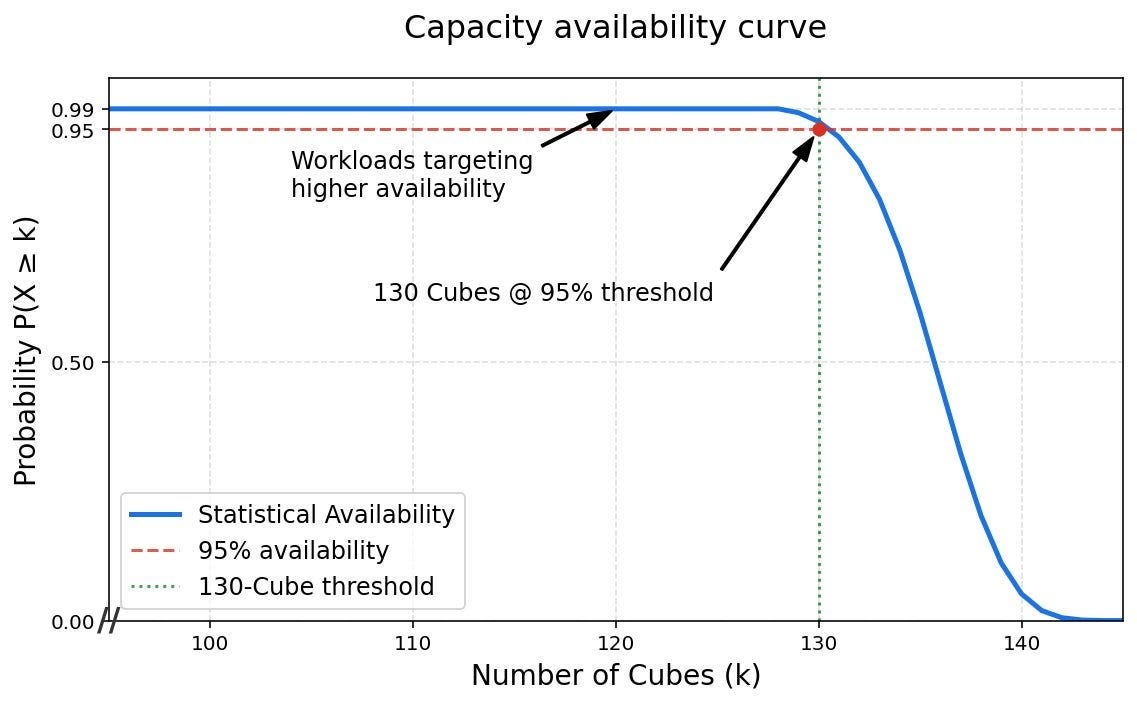

Google Cloud has introduced a cluster-level reliability framework for Tensor Processing Units (TPUs) designed to support the training of trillion-parameter AI models.

Storage

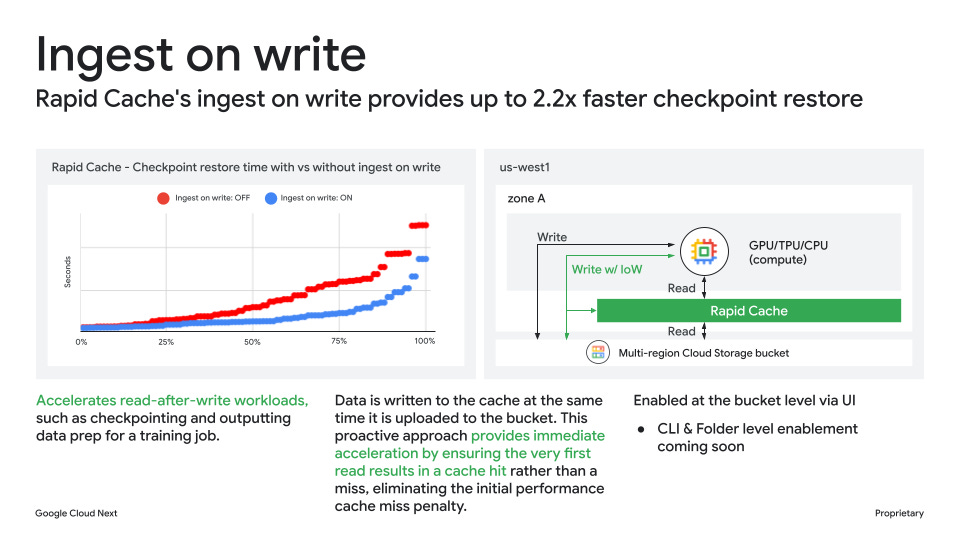

If you are looking to address storage bottlenecks in AI and machine learning workflows, you now have Cloud Storage Rapid, a suite of high-performance object storage capabilities designed for data-heavy workloads. This family includes Rapid Bucket, a zonal storage option that provides sub-millisecond latency and up to 15 TB/s throughput to keep accelerators like GPUs and TPUs saturated, and Rapid Cache, which increases read bandwidth for existing buckets without requiring code changes. Check out the blog post.

Write for Google Cloud Medium publication

If you would like to share your Google Cloud expertise with your fellow practitioners, consider becoming an author for the Google Cloud Medium publication. Reach out to me via comments and/or fill out this form and I’ll be happy to add you as a writer.

Stay in Touch

Have questions, comments, or other feedback on this newsletter? Please send Feedback.

If any of your peers are interested in receiving this newsletter, send them the Subscribe link.