Google Cloud Platform Technology Nuggets - April 16-30, 2026

Welcome to the April 16–30, 2026 edition of Google Cloud Platform Technology Nuggets. The nuggets are also available on YouTube.

This edition primarily consists of announcements from Cloud Next ’26.

Google Cloud Next 2026 — A Recap

Google Cloud Next 2026 was bigger than ever, and Google Cloud used it to share a vision for full AI enablement across all of its services. We highlight specific announcements in the respective sections of this newsletter, but if you want a single post that covers the key announcements, look no further than the recap post, 260 things we announced at Google Cloud Next ’26.

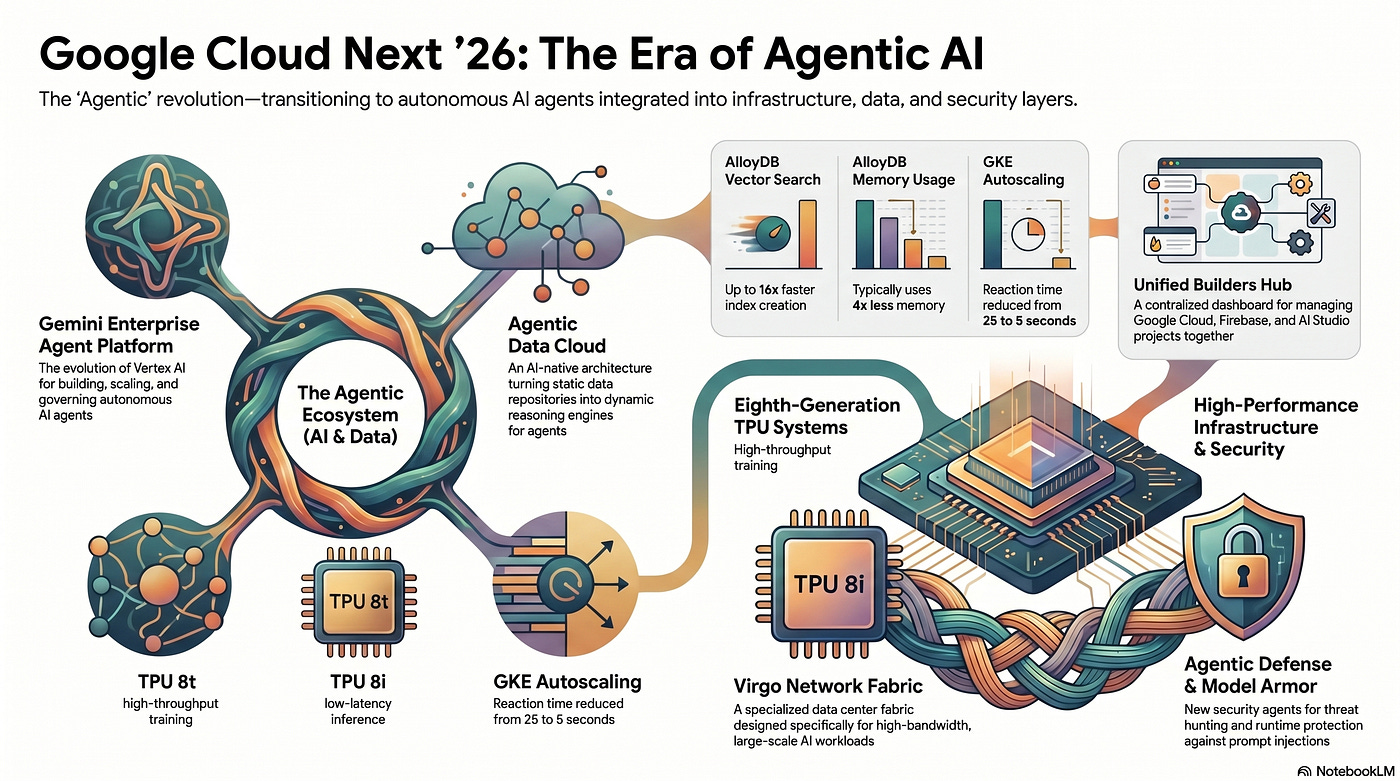

The Day 1 recap of Google Cloud Next ’26 focused on moving AI into production through a unified stack of infrastructure, data, and agents. Google Cloud introduced the Gemini Enterprise Agent Platform for building and governing AI agents, alongside specialized TPU 8t and 8i chips designed for high-scale training and cost-effective inference. The transition to an Agentic Data Cloud was announced to help ground agents in business data, while new Agentic Defense tools and integrations with Wiz were highlighted to automate security operations. Check out the post.

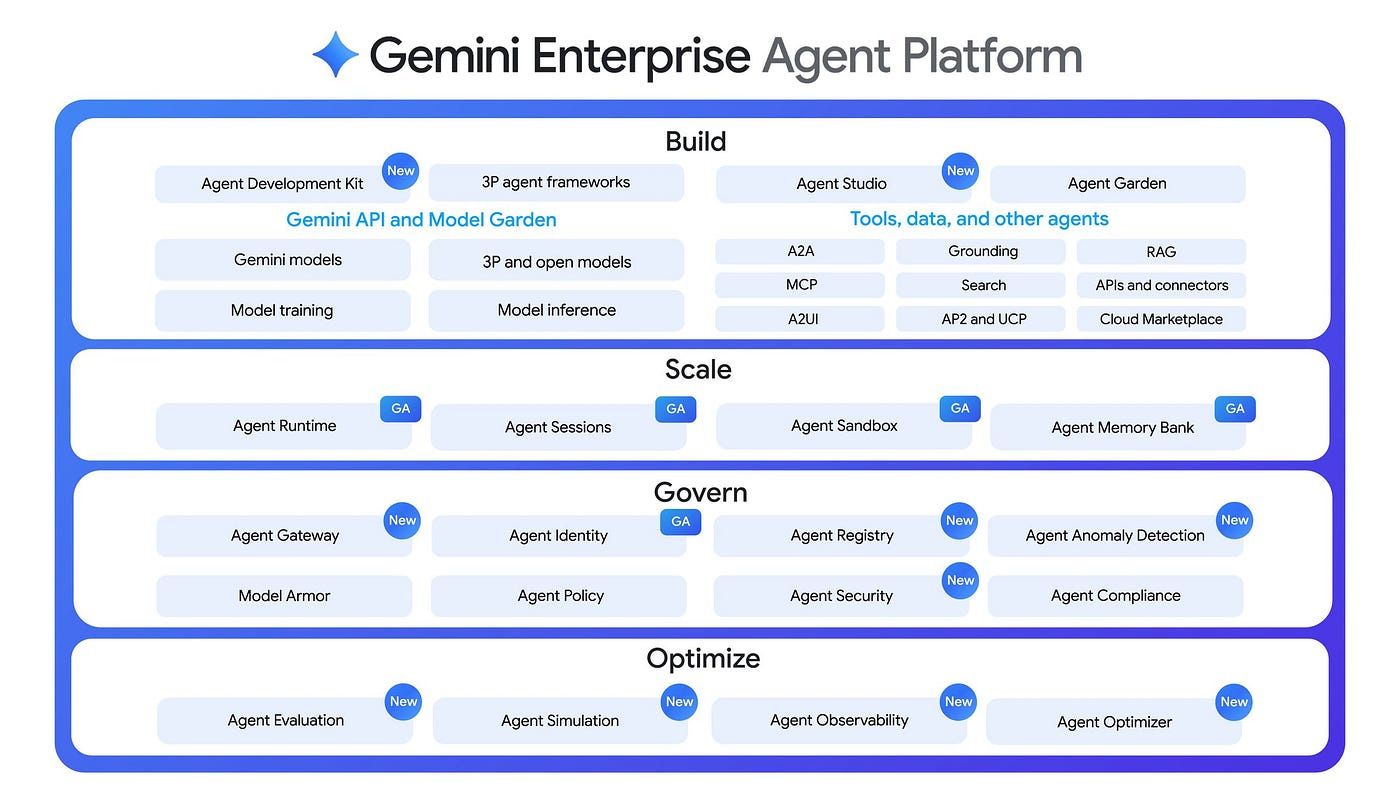

The developer keynote at Google Cloud Next ’26 focused on the Gemini Enterprise Agent Platform, a set of tools designed for building, scaling, and governing autonomous AI agents. Key technical highlights from the session included:

Agent Development Kit (ADK) and Runtime

Memory and Persistence: Use of Agent Platform Sessions and Memory Banks to allow agents to retain knowledge and optimize performance over time.

Debugging and Observability: Features like Agent Runtime trace view and Gemini Cloud Assist for investigating reasoning paths and tool calls.
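The split between Sessions and Memory Bank is easier to see in code. What follows is a minimal sketch in plain Python, assuming a simple model in which a session holds the events of one conversation and a memory bank keeps distilled facts that outlive it. The classes `Session` and `MemoryBank` and the `remember:` convention are hypothetical illustrations, not the ADK or Agent Platform API.

```python
from dataclasses import dataclass, field


@dataclass
class Session:
    """Hypothetical short-lived state: every event in one conversation."""
    user_id: str
    events: list[str] = field(default_factory=list)

    def add_event(self, text: str) -> None:
        self.events.append(text)


@dataclass
class MemoryBank:
    """Hypothetical long-lived store of per-user facts that survives across sessions."""
    facts: dict[str, set[str]] = field(default_factory=dict)

    def consolidate(self, session: Session) -> None:
        # Stand-in extraction rule: keep only events explicitly marked "remember:".
        for event in session.events:
            if event.startswith("remember:"):
                fact = event.removeprefix("remember:").strip()
                self.facts.setdefault(session.user_id, set()).add(fact)

    def recall(self, user_id: str) -> set[str]:
        return self.facts.get(user_id, set())


bank = MemoryBank()
day1 = Session("alice")
day1.add_event("user: deploy to staging")
day1.add_event("remember: prefers europe-west1")
bank.consolidate(day1)

# A new session starts empty, but long-term facts are still available.
day2 = Session("alice")
print(len(day2.events), bank.recall("alice"))  # 0 {'prefers europe-west1'}
```

The `remember:` prefix rule is only a stand-in for whatever extraction step a real memory service performs.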

Check out the blog post for more details.

AI and Machine Learning

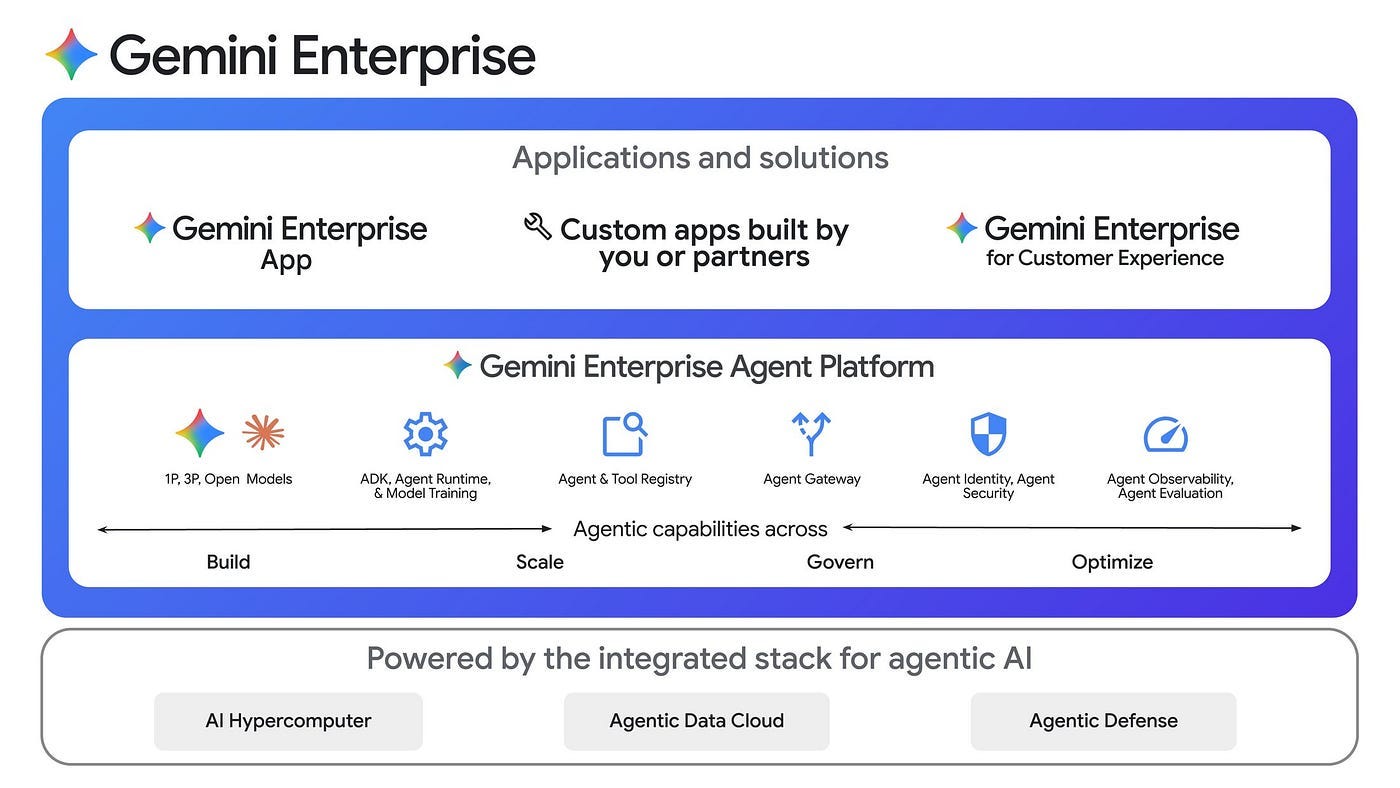

At Cloud Next ’26, Google expanded the Gemini Enterprise portfolio, which includes several key components:

Gemini Enterprise Agent Platform: The new developer platform and the evolution of Vertex AI. It includes the models as well as development and tuning services.

Gemini Enterprise app: This lets teams discover, create, share, and run AI agents in a single, secure environment. The Gemini Enterprise app is built on the Agent Platform, meaning all governance, security, and identity capabilities come standard, and it integrates with your enterprise data.

An open partner ecosystem for discovering and deploying a wide range of third-party agents, all within a secure and governed environment.

If you are investing in the Google Cloud agent story, it's time to take another look at the Gemini Enterprise Agent Platform, which is now a comprehensive environment that evolves Vertex AI by integrating model selection with new capabilities for agent orchestration, security, and DevOps. Technical teams can build agents using the visual Agent Studio or the code-first Agent Development Kit (ADK), which now supports graph-based frameworks for multi-agent networking. To handle production scaling, the platform features a re-engineered Agent Runtime with sub-second starts and Memory Bank for long-term context retention across multi-day workflows. Governance is handled through Agent Identity for auditable trails and Agent Gateway for centralized policy enforcement, while optimization tools like Agent Simulation and observability traces let developers debug and refine reasoning logic. For more details, check out the blog post.

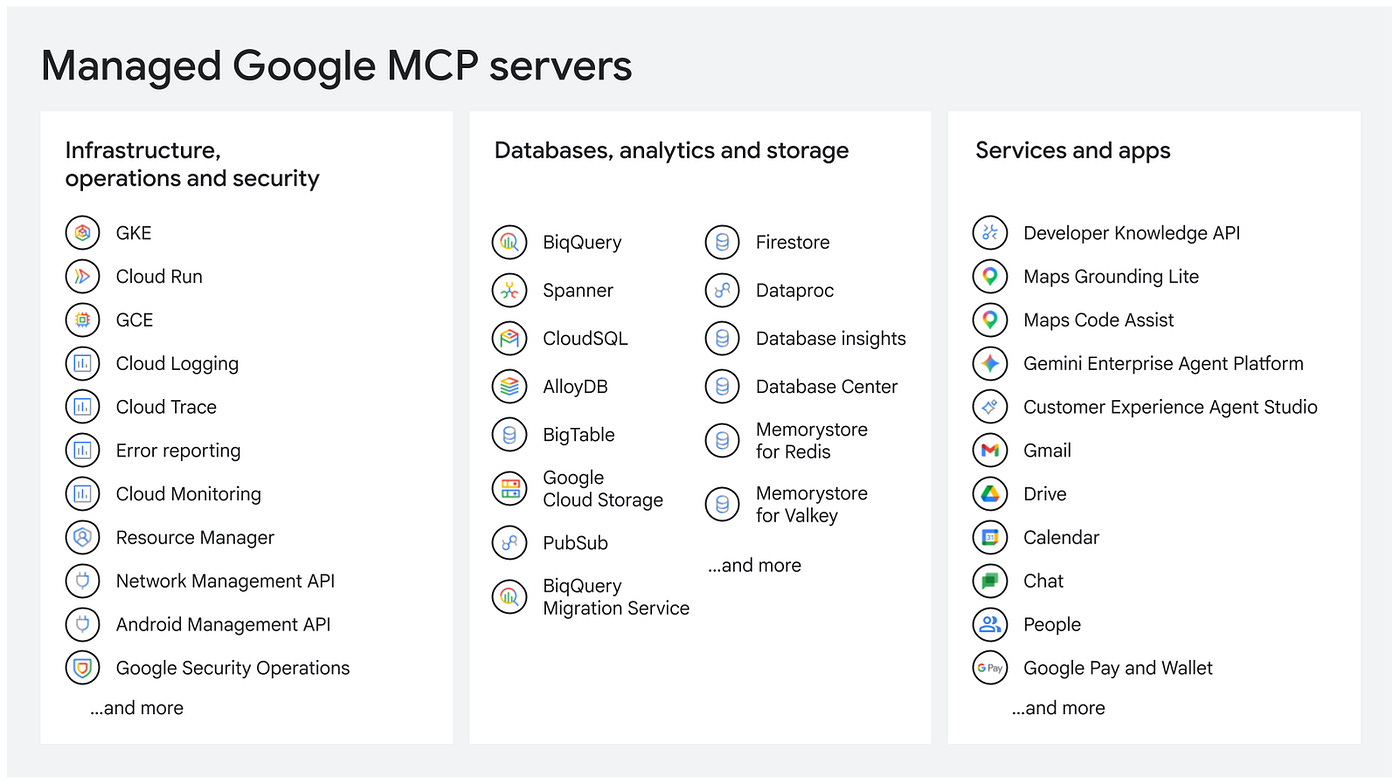

More than 50 fully managed Model Context Protocol (MCP) servers across Google Cloud services are now available to your AI agents. Key technical features include centralized discovery via an Agent Registry, fine-grained access control through Cloud IAM, and in-line safety protections with Model Armor. Developers can use these servers to let agents perform actions across data services like Spanner and BigQuery, manage infrastructure on GKE, and access productivity tools in Workspace. The platform also includes Cloud Audit Logs for monitoring agentic actions and troubleshooting. For more details, check out the blog post.
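MCP messages are JSON-RPC 2.0, and `tools/list` and `tools/call` are the standard MCP methods for discovering and invoking tools. The sketch below builds those two requests using only the Python standard library. The tool name `run_query` and its `sql` argument are hypothetical placeholders rather than the actual tools exposed by the Google-managed servers, and transport and authentication (where Cloud IAM would apply) are left out.

```python
import json
from itertools import count
from typing import Optional

# Monotonic request IDs, as JSON-RPC expects each request to carry a unique id.
_ids = count(1)


def mcp_request(method: str, params: Optional[dict] = None) -> str:
    """Build a JSON-RPC 2.0 request string of the kind an MCP client sends."""
    message = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        message["params"] = params
    return json.dumps(message)


# Discover which tools the server exposes.
list_req = mcp_request("tools/list")

# Invoke one tool; "run_query" and its arguments are hypothetical placeholders.
call_req = mcp_request(
    "tools/call",
    {"name": "run_query", "arguments": {"sql": "SELECT 1"}},
)

print(list_req)
print(call_req)
```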

Claude Opus 4.7 is available on Vertex AI. This model update features improved performance in coding, instruction following, and handling ambiguous prompts, alongside enhanced vision capabilities for processing high-resolution images of documents and charts.

Also for Claude on Google Cloud, multi-region endpoints for Claude on Vertex AI are now available in public preview for the U.S. and EU geographies. These endpoints automatically distribute traffic across multiple regions within a single geography, which helps developers meet data residency requirements and provides a middle ground between low-latency regional endpoints and high-capacity global endpoints. For more details, check out the blog post.

Gemini 3.1 Flash TTS, a text-to-speech model, is now available in preview on Google AI Studio and Vertex AI. It provides granular control over synthetic voice output. The model supports over 70 languages and 30 prebuilt voices, and uses more than 200 inline audio tags (covering pacing, expressiveness, pauses, and more) to guide vocal delivery directly within the text prompt. Check out the announcement post, which includes a detailed prompting guide.

Data Analytics

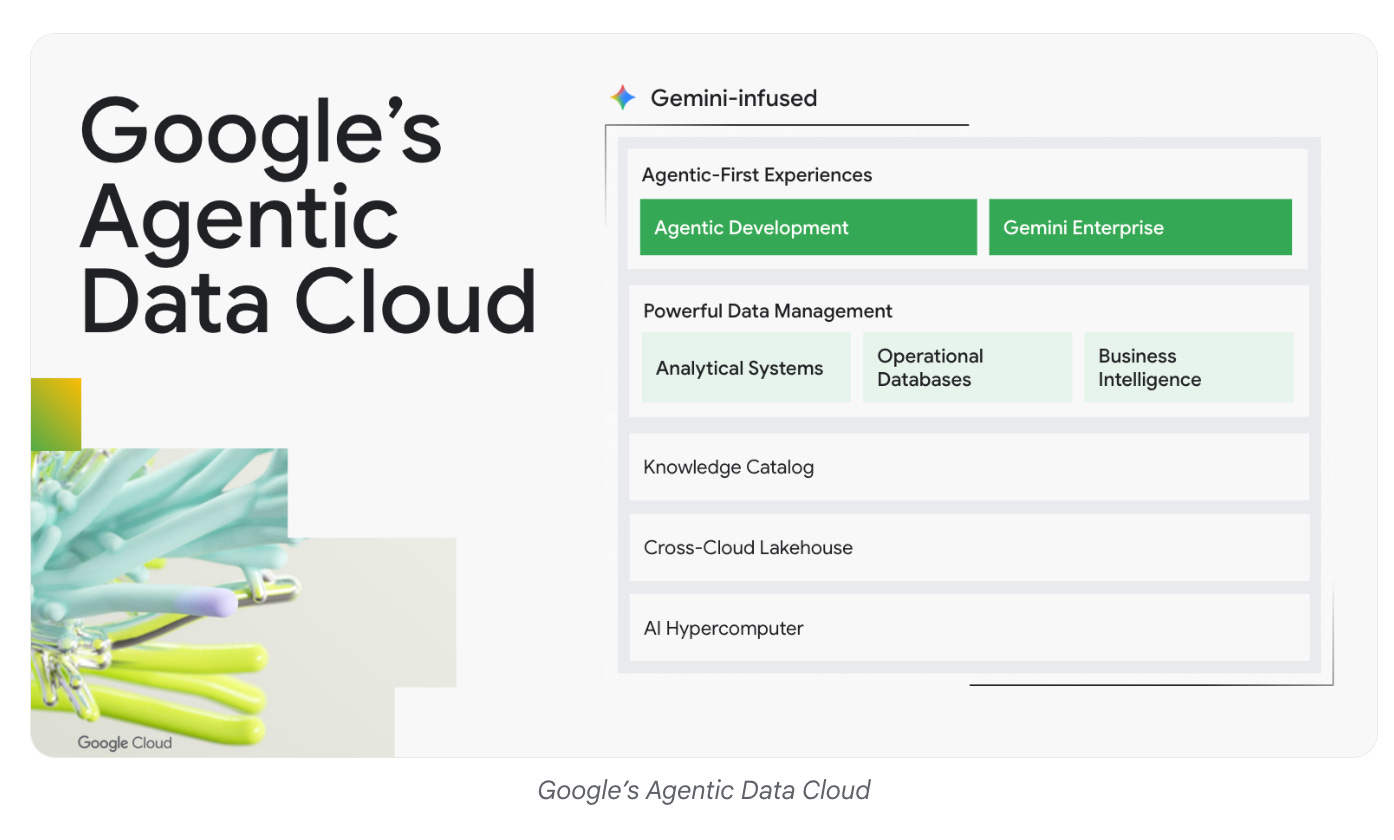

Agentic Data Cloud was one of the big themes of Cloud Next ’26. As the blog post states, it is an AI-native architecture that evolves the enterprise data platform from a static repository into a dynamic reasoning engine. It closes the gap between thinking and doing, allowing AI agents to act on your business data and context.

To understand this better, it helps to think in terms of the three areas that the blog post describes:

A universal context engine that provides agents with trusted business context to drive higher accuracy. A key component here is the Knowledge Catalog, which maps and infers business meaning across your entire data estate, using a framework of aggregation, continuous enrichment, and search. Check out this blog post to learn more.

Agentic-first practitioner experiences that evolve the role of data practitioners and developers into orchestrators of agents. Key to this is the Google Cloud Data Agent Kit (available in Preview). Rather than introducing a new interface, the kit provides a portable suite of skills, tools, environment-specific extensions, and built-in plugins that developers can use from the environments of their choice. The kit includes Data Science and Data Engineering Agents that plug into existing tools to help manage pipelines and more.

An AI-native, cross-cloud lakehouse that eliminates data silos by connecting your entire data estate. The foundation of this update is the integration of fully managed Apache Iceberg storage, which enables read and write interoperability across BigQuery, Managed Service for Apache Spark, and third-party engines. For more details, check out the blog post.

The Agentic Data Cloud is also explained in more detail in the blueprint guide here.

Databases

Databases and Data Analytics now overlap in several ways when it comes to the Agentic Data Cloud, but here is a summary of some of the database announcements:

Launch of Spanner Omni for multi-cloud and on-premises deployments, and the Spanner Columnar Engine for faster analytical queries on live data.

Fully managed Model Context Protocol (MCP) servers connect models to databases like AlloyDB and Firestore.

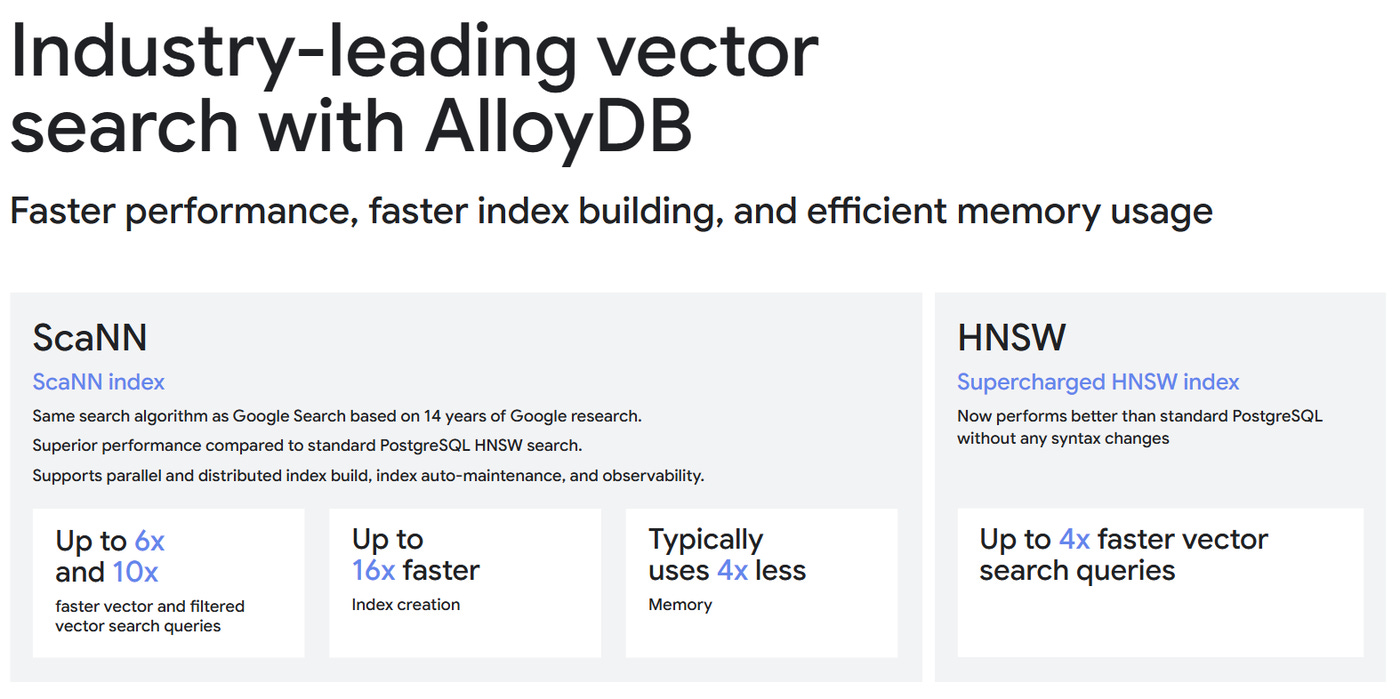

Performance enhancements feature AlloyDB scaling to 10 billion vectors, a new Bigtable in-memory tier for sub-millisecond latency, and native full-text search in Firestore.

New federation and reverse ETL capabilities allow AlloyDB to query data directly from BigQuery and Apache Iceberg without manual data movement.

For more details, check out the blog post.

Storage

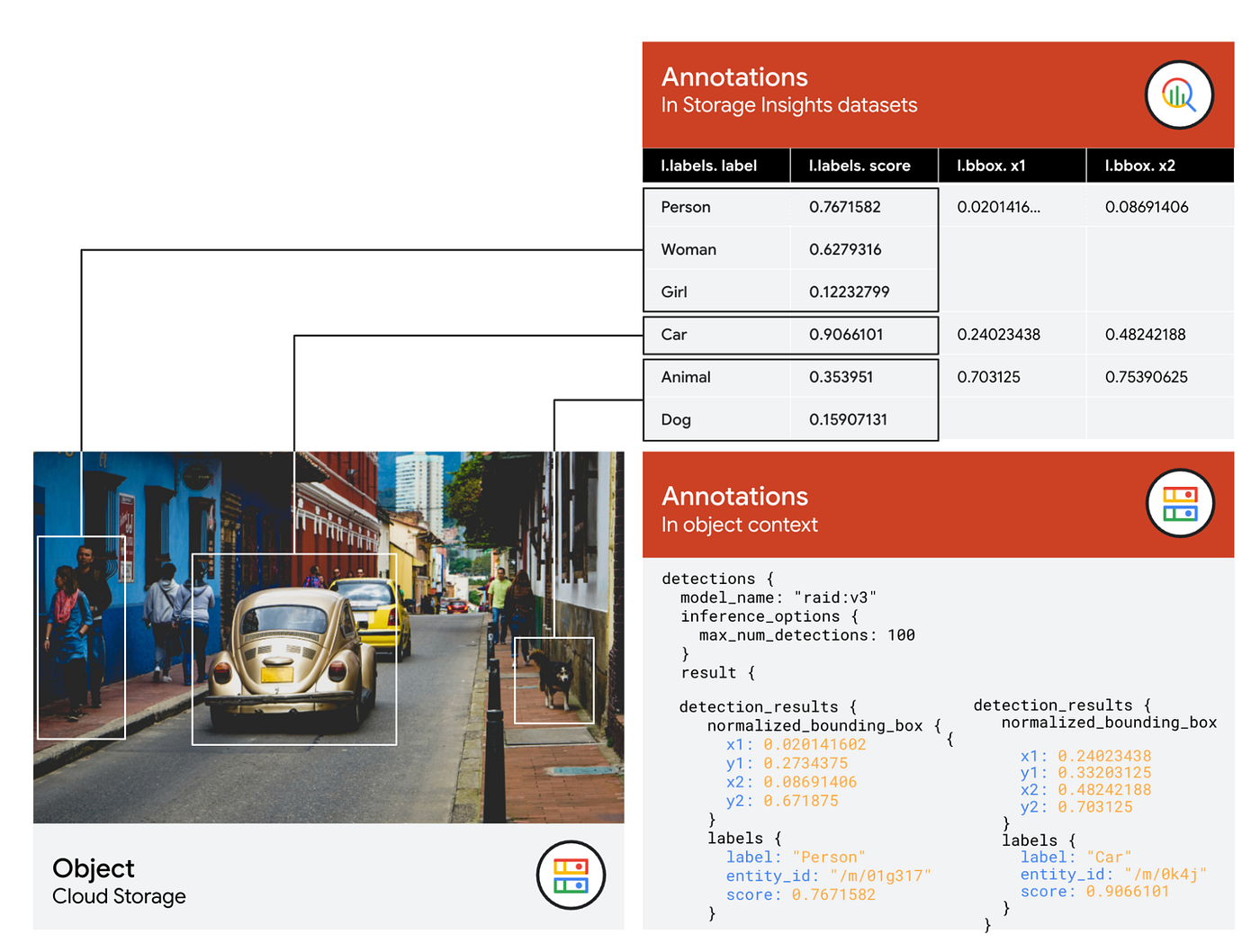

Often overshadowed by other announcements, the Storage portfolio had some key updates. The Cloud Storage Rapid family now includes Rapid Bucket, which provides high-bandwidth, low-latency object storage via gRPC and S3-compatible APIs, and Rapid Cache for accelerating read throughput and checkpoint restores. Smart Storage capabilities now include automated metadata annotations and an MCP server to help AI agents process unstructured data without manual pipelines. Additionally, Storage Intelligence adds new dashboards for cost and security monitoring. For more details, check out the blog post.

Security and Identity

Key security updates at Next ’26 focused on protecting AI workloads and cloud infrastructure. These include three new agents for Google Security Operations designed for threat hunting, detection engineering, and third-party context analysis. Through Wiz, the platform now provides visibility into AI-native development with an AI Bill of Materials (AI-BOM) to track frameworks and models, alongside tools to secure AI-generated code. New governance features like Agent Identity and Agent Gateway enable access management and policy enforcement for autonomous agents, while Model Armor provides runtime protection against prompt injections and data leakage. Check out the Security announcements in detail.

Also announced was Google Cloud Fraud Defense, a new trust platform specifically designed for the agentic web and touted as the next evolution of reCAPTCHA.

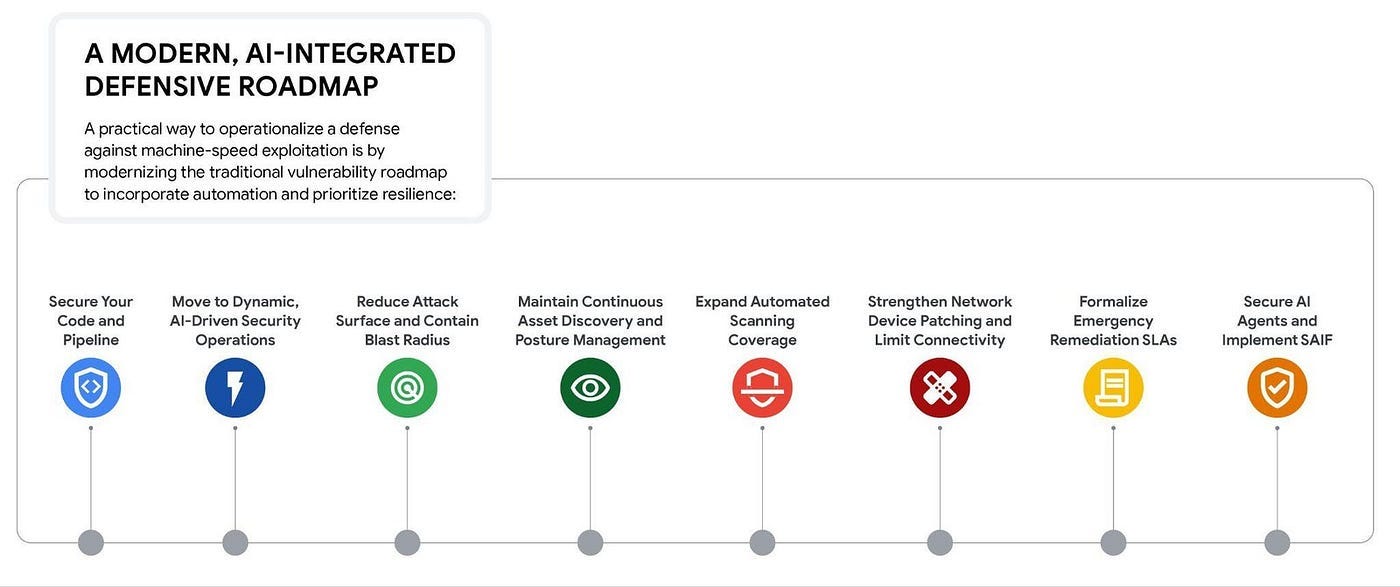

Machine learning models are accelerating the exploitation lifecycle. What should enterprises do to adapt? This essential guide details a defensive roadmap that includes securing source code through secrets scanning, implementing zero-trust network segmentation, and maintaining continuous, automated asset discovery to eliminate blind spots. For more details, check out the blog post.

Developers & Practitioners

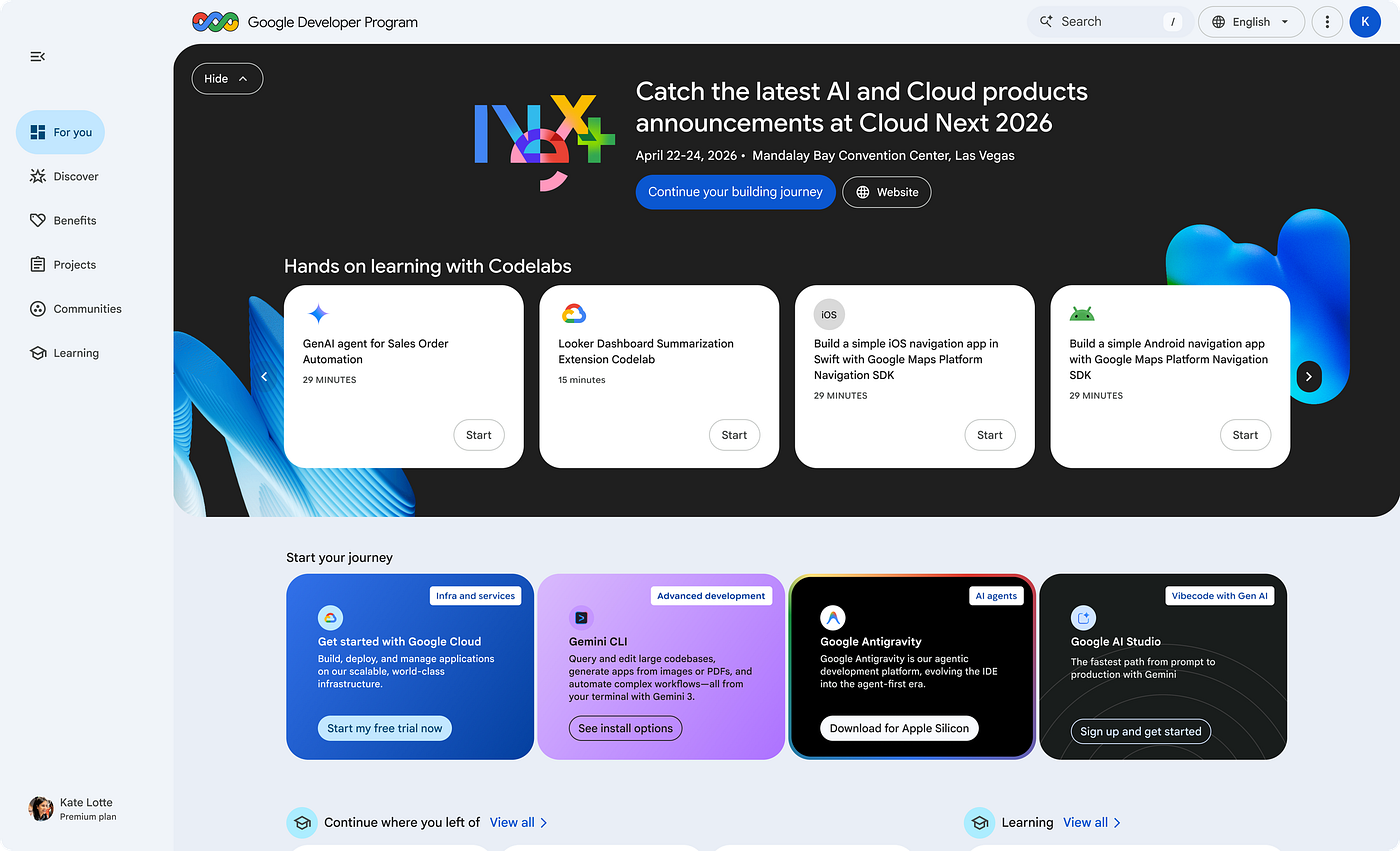

Google Cloud has introduced the Builders Hub within the Google Developer Program, a centralized service designed to simplify the development process by providing a single entry point for projects and resources. This platform features a unified project dashboard that allows developers to view and manage Google Cloud, Firebase, and AI Studio projects in one location, removing the need to switch between different consoles. The hub includes an integrated workbench where users can access Google Cloud credits directly within Codelabs to test environments immediately. Additionally, it provides personalized learning paths based on a user’s specific tech stack, a discovery engine for local community events, and a system to earn and display digital badges for technical milestones. For more details, check out the blog post.

Google has launched an official Agent Skills repository that provides AI agents with compact, “agent-first” documentation for technical tasks. These skills are designed to reduce context bloat by letting agents load specific expertise, such as BigQuery, GKE, or security best practices, only when needed. Check out the post.
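The on-demand loading idea can be sketched as follows, assuming a model where the agent always sees a one-line index of every skill but pulls a skill's full instructions into its context only when the task calls for it. The `Skill` type, the skill texts, and the keyword matching are hypothetical; the official repository's file layout and the way an agent selects skills may differ.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Skill:
    """Hypothetical skill: a cheap summary plus a lazily loaded body."""
    name: str
    description: str              # one-line summary, always shown to the agent
    load_body: Callable[[], str]  # full instructions, fetched only on demand


SKILLS = [
    Skill("bigquery", "Query and manage BigQuery datasets",
          lambda: "BigQuery guide: partition large tables, avoid SELECT * ..."),
    Skill("gke", "Deploy and operate workloads on GKE",
          lambda: "GKE guide: set resource requests, use Workload Identity ..."),
]


def build_context(task: str) -> str:
    # The index of every skill is always included because it is small.
    lines = [f"- {s.name}: {s.description}" for s in SKILLS]
    # Full bodies are loaded only for skills the task mentions
    # (naive keyword matching stands in for the agent's own choice).
    for s in SKILLS:
        if s.name in task.lower():
            lines.append(s.load_body())
    return "\n".join(lines)


print(build_context("Optimize this BigQuery job"))
```

Keeping only the index resident is what keeps the context small; the cost of a skill's full text is paid only for the tasks that need it.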

Containers and Kubernetes

GKE received several updates at Next ’26 designed to support large-scale AI and agentic workloads. Key technical releases include GKE Agent Sandbox, which uses gVisor kernel isolation to execute autonomous agents securely with low latency, and GKE hypercluster, a control plane capable of managing millions of accelerators across multiple regions while maintaining pod-level isolation. For inference, GKE now features Predictive Latency Boost for real-time capacity routing and Automatic KV Cache storage tiering across RAM, Local SSD, and GCS to reduce memory bottlenecks. Additionally, new Reinforcement Learning (RL) capabilities such as the RL Scheduler and RL Sandbox aim to improve accelerator utilization during sampling and training steps. Finally, intent-based autoscaling provides native custom metrics support for the Horizontal Pod Autoscaler, reducing the reaction time for infrastructure elasticity from 25 seconds to 5 seconds. For more details, check out the blog post.
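Assuming intent-based autoscaling still feeds the standard Horizontal Pod Autoscaler algorithm, the core rule documented for Kubernetes is desiredReplicas = ceil(currentReplicas × currentMetricValue / desiredMetricValue), with no change when the ratio is within a tolerance (0.1 by default). Here is a minimal sketch of that rule; it ignores min/max replica bounds, stabilization windows, and pod readiness, and the "in-flight requests per pod" metric is a hypothetical example.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float,
                     target_metric: float, tolerance: float = 0.1) -> int:
    """Core HPA scaling rule (no min/max bounds, no stabilization window)."""
    ratio = current_metric / target_metric
    # If the metric is within tolerance of the target, keep the current count.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    # Otherwise scale proportionally, rounding up.
    return math.ceil(current_replicas * ratio)


# Hypothetical custom metric: in-flight requests per pod, target of 10.
print(desired_replicas(4, 25.0, 10.0))  # 10: ratio 2.5 means 4 * 2.5 pods
print(desired_replicas(4, 10.5, 10.0))  # 4: within the 10% tolerance
```

The faster reaction time in the announcement affects how soon a new metric value reaches this calculation, not the calculation itself.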

Infrastructure

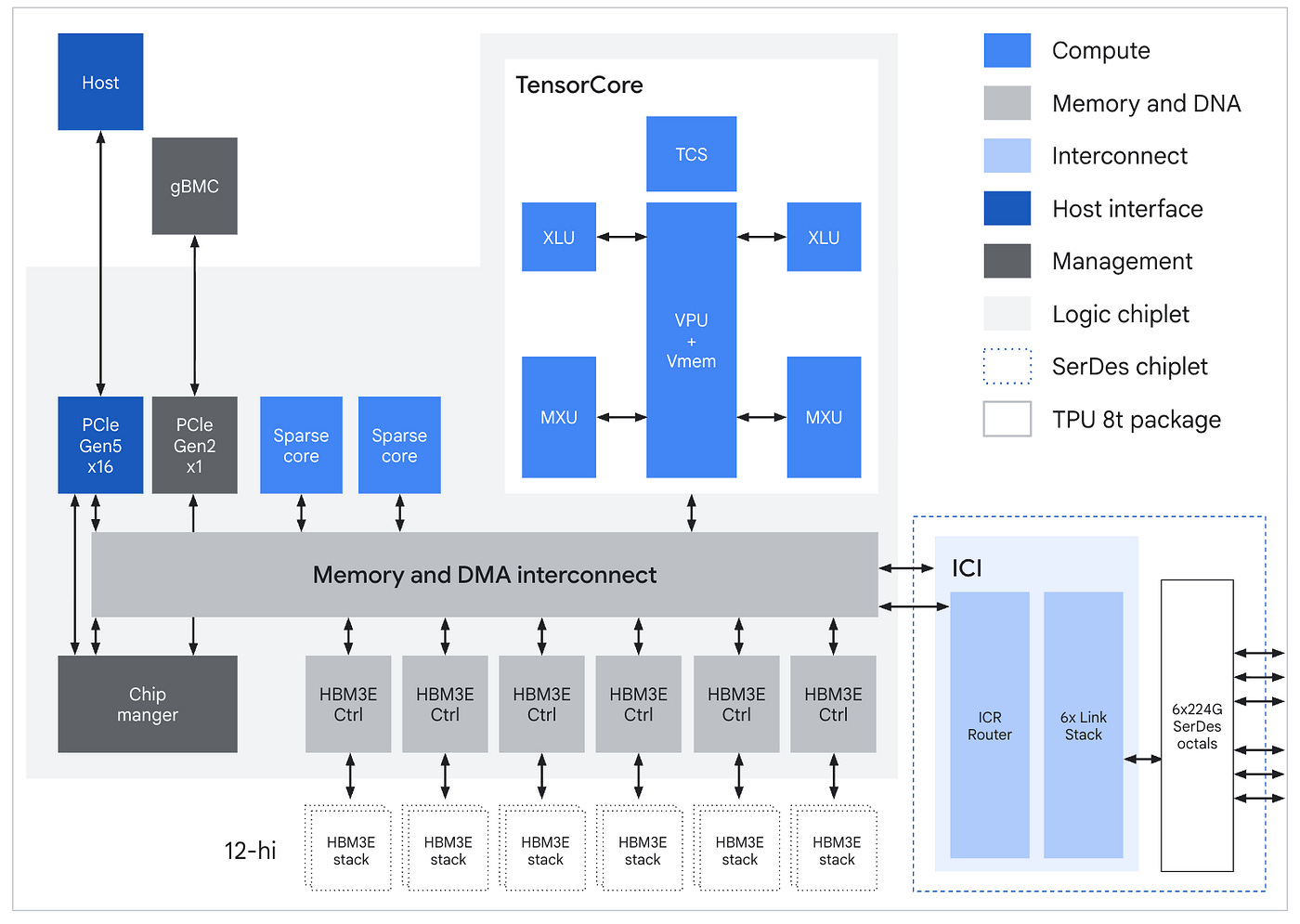

One of the key announcements in AI infrastructure at Next ’26 was the introduction of the eighth-generation TPU systems: the TPU 8t for high-throughput training and the TPU 8i for low-latency inference. In addition to that, we now have A5X bare metal instances (powered by NVIDIA Vera Rubin NVL72), Axion N4A VMs (powered by our custom Axion Arm-based CPUs) and Google Compute Engine 4th generation VMs (powered by Intel and AMD x86-based CPUs). Check out the blog post for more infrastructure announcements.

For a closer look at the eighth-generation TPU, check out this architectural deep dive.

Networking

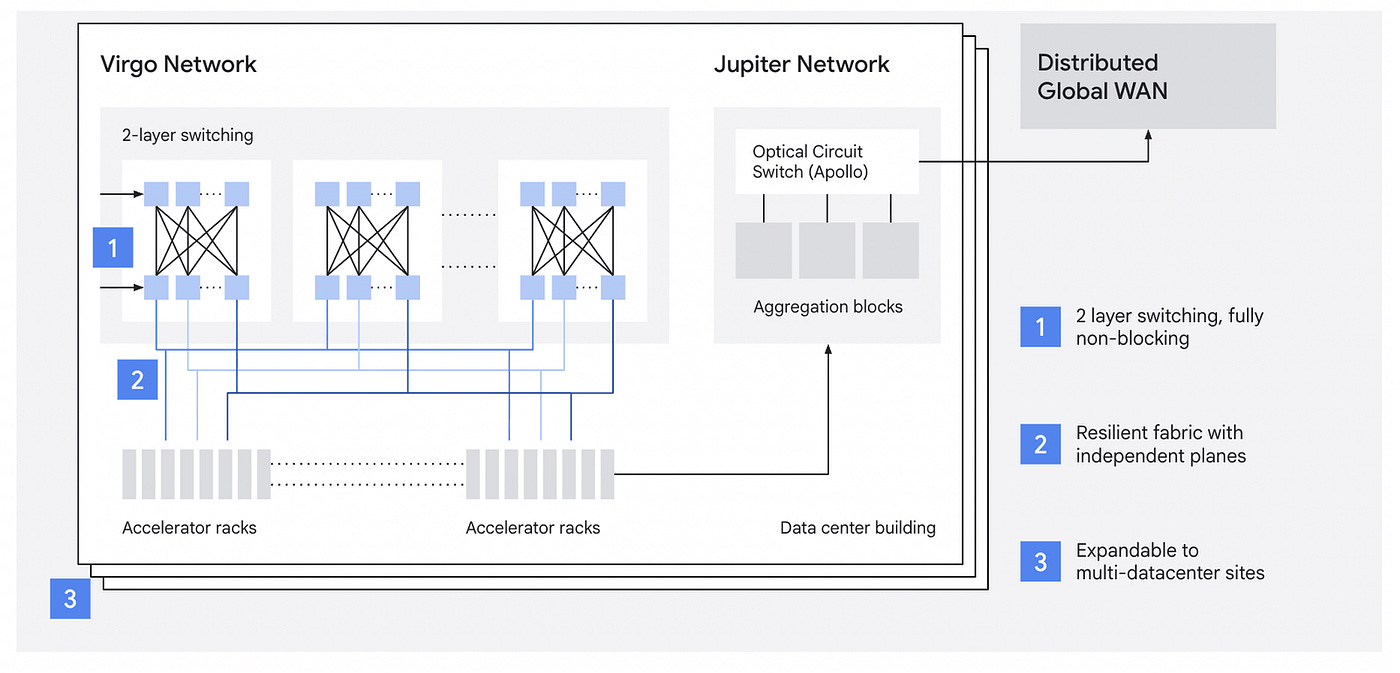

Google Cloud has introduced Virgo Network, a specialized scale-out data center fabric designed to meet the high bandwidth and low latency requirements of large-scale AI workloads. To address the limitations of traditional networks, this architecture utilizes a flat, two-layer non-blocking topology and a multi-planar design that separates east-west accelerator traffic from north-south storage and compute traffic. Check out the blog for more details.
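To put "non-blocking" in numbers, here is generic textbook arithmetic for a two-layer leaf-spine (folded Clos) fabric: a leaf is non-blocking when its host-facing bandwidth does not exceed its spine-facing bandwidth (an oversubscription ratio of 1.0 or less), and identical radix-k switches can connect at most k²/2 hosts in two layers. The port counts and speeds below are illustrative assumptions, not Virgo's published design.

```python
def oversubscription(down_ports: int, up_ports: int,
                     down_gbps: float, up_gbps: float) -> float:
    """Host-facing vs. spine-facing bandwidth on one leaf; <= 1.0 is non-blocking."""
    return (down_ports * down_gbps) / (up_ports * up_gbps)


def max_hosts_two_tier(radix: int) -> int:
    """Hosts in a non-blocking two-tier leaf-spine of identical radix-k switches.

    Each leaf uses k/2 ports for hosts and k/2 ports for one link to each of
    k/2 spines. Each spine has k ports, so the fabric holds k leaves,
    giving k * (k/2) hosts.
    """
    if radix % 2:
        raise ValueError("radix must be even")
    leaves = radix
    return leaves * (radix // 2)


print(oversubscription(32, 32, 400, 400))  # 1.0: non-blocking
print(oversubscription(48, 16, 100, 100))  # 3.0: 3:1 oversubscribed
print(max_hosts_two_tier(64))              # 2048
```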

Cross-Cloud Network has seen several updates, including:

Introduction of Agent Gateway to manage agentic protocols (MCP, A2A) and ambient networking for GKE and Cloud Run, which provides service-to-service connectivity and zero-trust access without sidecar proxies.

Cloud Network Insights provides performance and digital experience monitoring, integrated with Gemini Cloud Assist for natural language troubleshooting.

GKE Inference Gateway now supports multi-region scaling for failover and predictive latency boost for optimized request routing.

Cloud NGFW adds an advanced malware sandbox and Cloud Armor integrates Google Cloud Fraud Defense and adaptive protection for Network Load Balancers.

Check out the blog post for more details.

Serverless

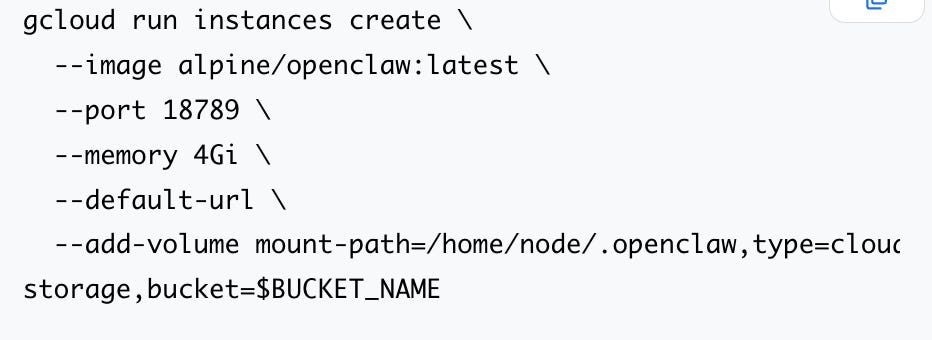

Cloud Run, a popular service with developers, saw significant enhancements at Cloud Next ’26. It introduced a fully managed Cloud Run MCP Server that helps you manage your deployments, along with several features centered on AI integration and developer experience.

For AI workloads, Cloud Run now supports NVIDIA RTX PRO 6000 Blackwell GPUs, allows for the creation of individual instances for background agents, and introduces ephemeral sandboxes for isolated code execution.

Developer-focused improvements feature direct SSH access into containers for troubleshooting, the addition of ephemeral disk storage, and upcoming billing caps to manage costs.

For more details, check out the blog post.

Operations

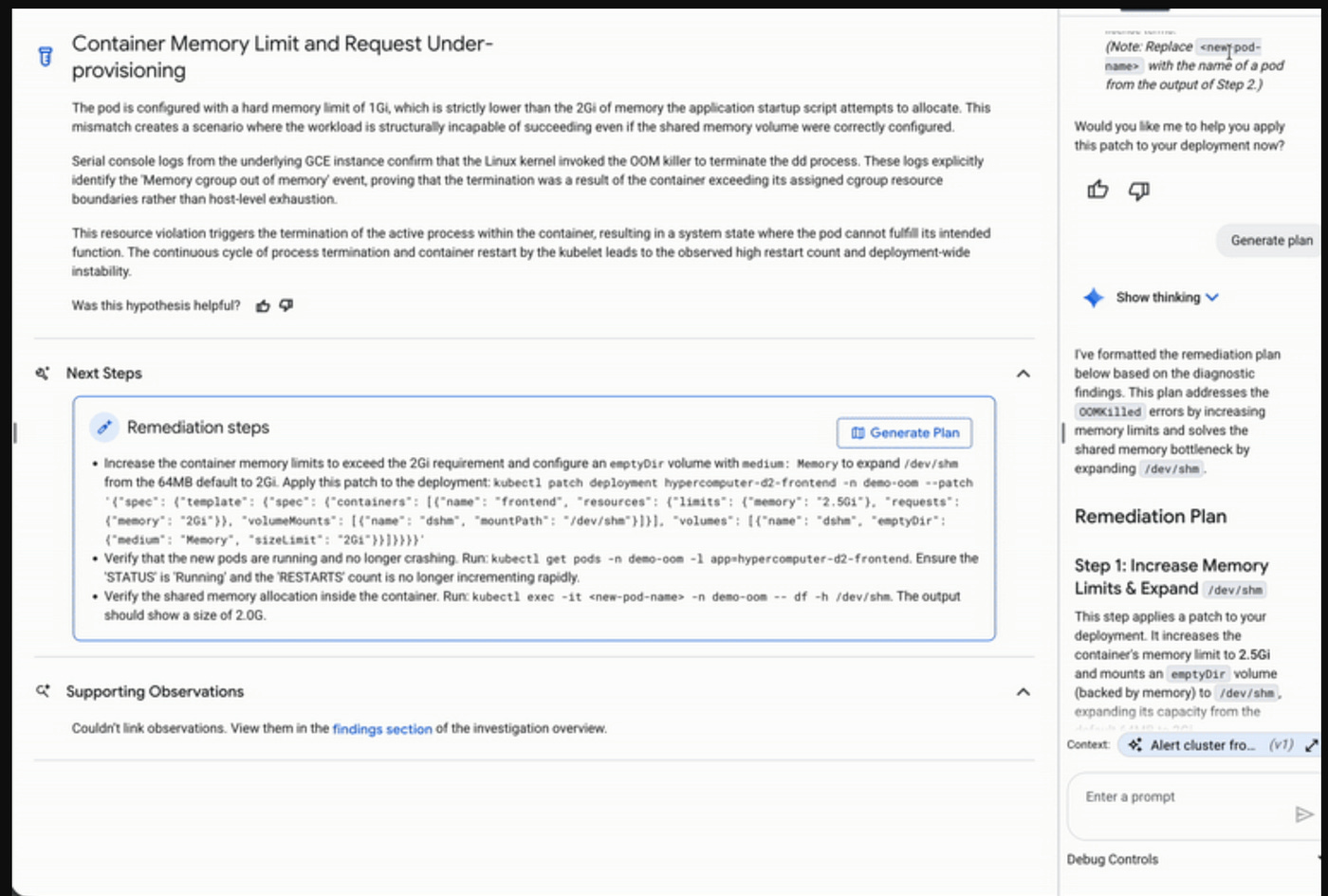

Gemini Cloud Assist has become a lot more proactive since its initial announcement last year. It now features a redesigned Application Design Center that converts natural-language intent into visual architectures and deployable Terraform templates while following security and compliance best practices. For troubleshooting, it uses Gemini 3 to correlate logs and metrics across infrastructure and application code, identifying root causes and exploring hypotheses automatically. In addition to the FinOps agent covered in the FinOps section, the tool is now accessible via a fully managed MCP Server. For more details, check out the blog post.

FinOps

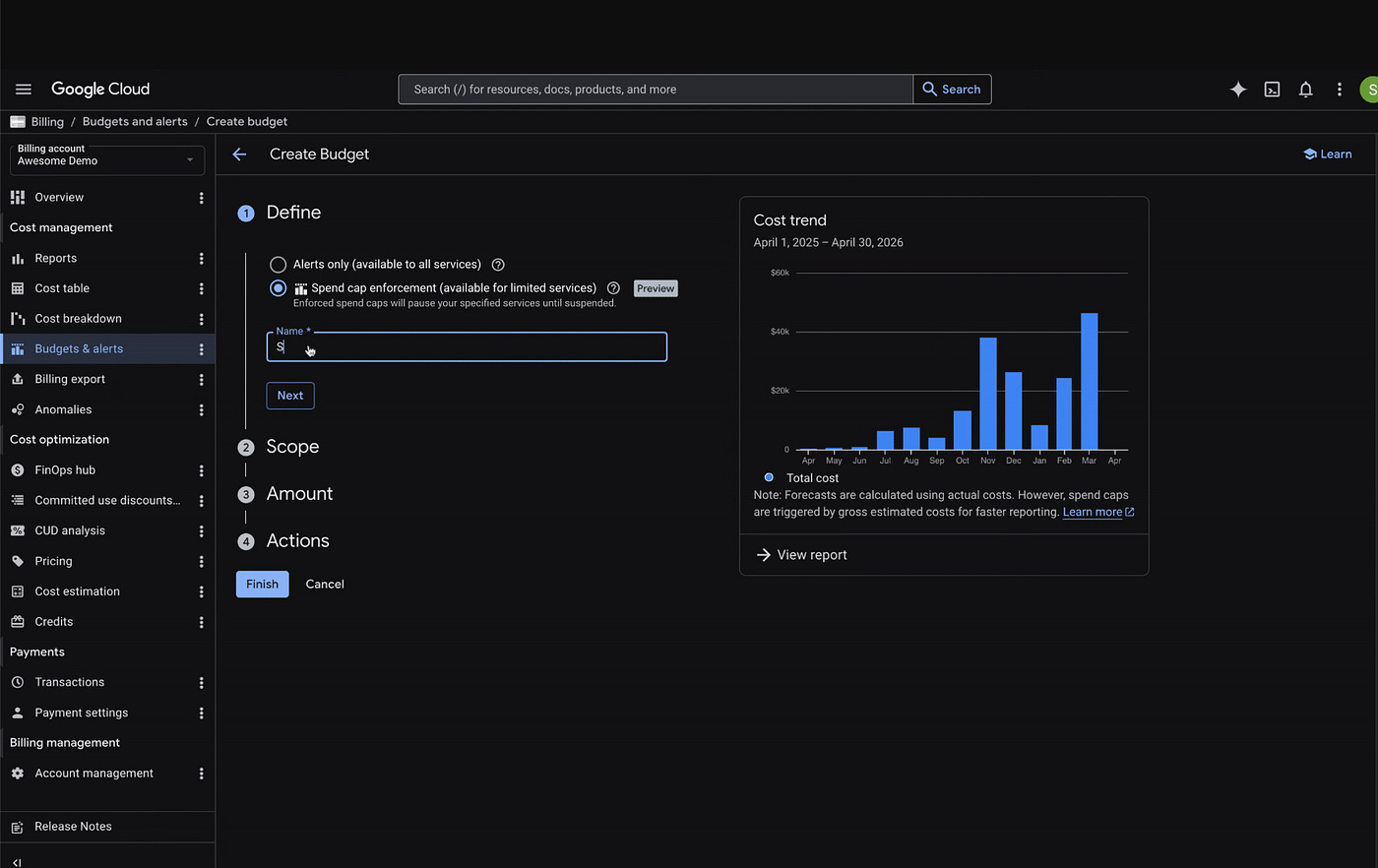

AI-related costs are an important topic. One of the key announcements was the FinOps Explainability agent, which uses Gemini to automatically identify and break down expenses for specific services like Gemini Pro or Flash. Another key need is the ability to set limits and stop project services once a specified spend limit is reached. To that end, Spend Caps for Google AI Studio, Agent Platform, and Cloud Run are now in private preview. These caps let users set project-level budget boundaries that pause API traffic once reached, to prevent overspending on specialized hardware. For more details, check out the blog post.
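As a rough mental model of a project-level cap that pauses traffic, here is a hypothetical sketch in plain Python. `ProjectSpendCap`, the $1.00 cap, and the $0.30 per-call cost are illustrative assumptions; the actual Spend Caps feature is configured in the product, and this sketch does not reflect how it is enforced.

```python
from dataclasses import dataclass


class SpendCapExceeded(Exception):
    """Raised when a request arrives after the project's cap is reached."""


@dataclass
class ProjectSpendCap:
    """Hypothetical gate: stop serving requests once accrued spend hits the cap."""
    cap_usd: float
    spent_usd: float = 0.0

    def charge(self, cost_usd: float) -> None:
        # Once the cap is reached, traffic is paused instead of billed.
        if self.spent_usd >= self.cap_usd:
            raise SpendCapExceeded(f"cap of ${self.cap_usd:.2f} reached")
        self.spent_usd += cost_usd


cap = ProjectSpendCap(cap_usd=1.00)
served = 0
try:
    for _ in range(10):
        cap.charge(0.30)  # each hypothetical API call costs $0.30
        served += 1
except SpendCapExceeded as err:
    print(f"paused after {served} calls: {err}")
```

This sketch checks accrued spend before each request, so the last allowed call can push spend past the cap ($1.20 here); a stricter variant would reject any call for which `spent_usd + cost_usd` exceeds the cap.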

Learning

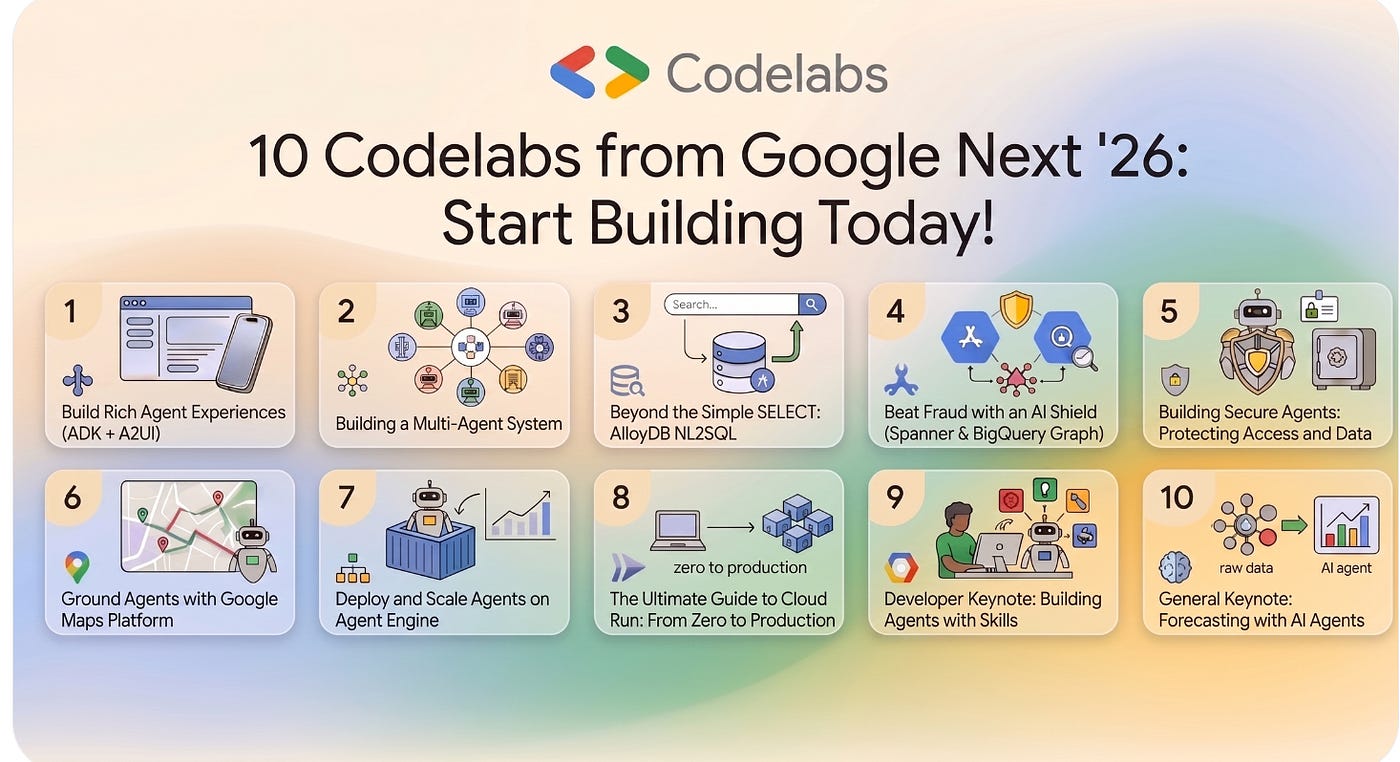

If you are looking to move from high-level AI concepts to building functional agentic systems, here is a curated list of 10 codelabs from Next ’26 focused on multi-agent orchestration, data grounding, and enterprise security. These technical guides cover building interfaces with the Agent Development Kit (ADK) and A2UI, creating multi-agent architectures, and enabling natural language queries in AlloyDB via NL2SQL. You can also explore real-time fraud prevention using Spanner and BigQuery Graph, securing reasoning engines with Model Armor and IAM, and grounding agents in location data through the Google Maps Platform.

Write for Google Cloud Medium publication

If you would like to share your Google Cloud expertise with your fellow practitioners, consider becoming an author for the Google Cloud Medium publication. Reach out to me via comments and/or fill out this form, and I’ll be happy to add you as a writer.

Stay in Touch

Have questions, comments, or other feedback on this newsletter? Please send Feedback.

If any of your peers are interested in receiving this newsletter, send them the Subscribe link.